Welcome to a new edition of Coworkings AI

In this edition, we’re covering:

What Claude Skills are, how they enable agents to perform more specialized work, and why this capability is so relevant for teams building with AI today.

The four layers AI Agents need to build trust and drive customer adoption in customer support

Let’s dive in.

Latest AI News & Trends

Claude Skills: How AI Agents learn to do specialized work

As AI advances from chat to operational agents, Claude is embracing this transition with features like Skills, a way to build structured, reusable, and scalable AI workflows tailored for specialized tasks.

Let’s review the main concepts needed to understand what Skills are:

What are Skills?

Skills are folders that contain instructions, scripts, and supporting resources. Claude can discover and load them dynamically to improve performance on specific tasks.

Skills help Claude complete work in a consistent way, like creating documents that follow your brand standards, analyzing data using your team’s workflow, or automating recurring internal tasks.

Think of Skills as specialized training manuals that give Claude reliable “how we do it here” guidance for a specific domain.

How do Skills work?

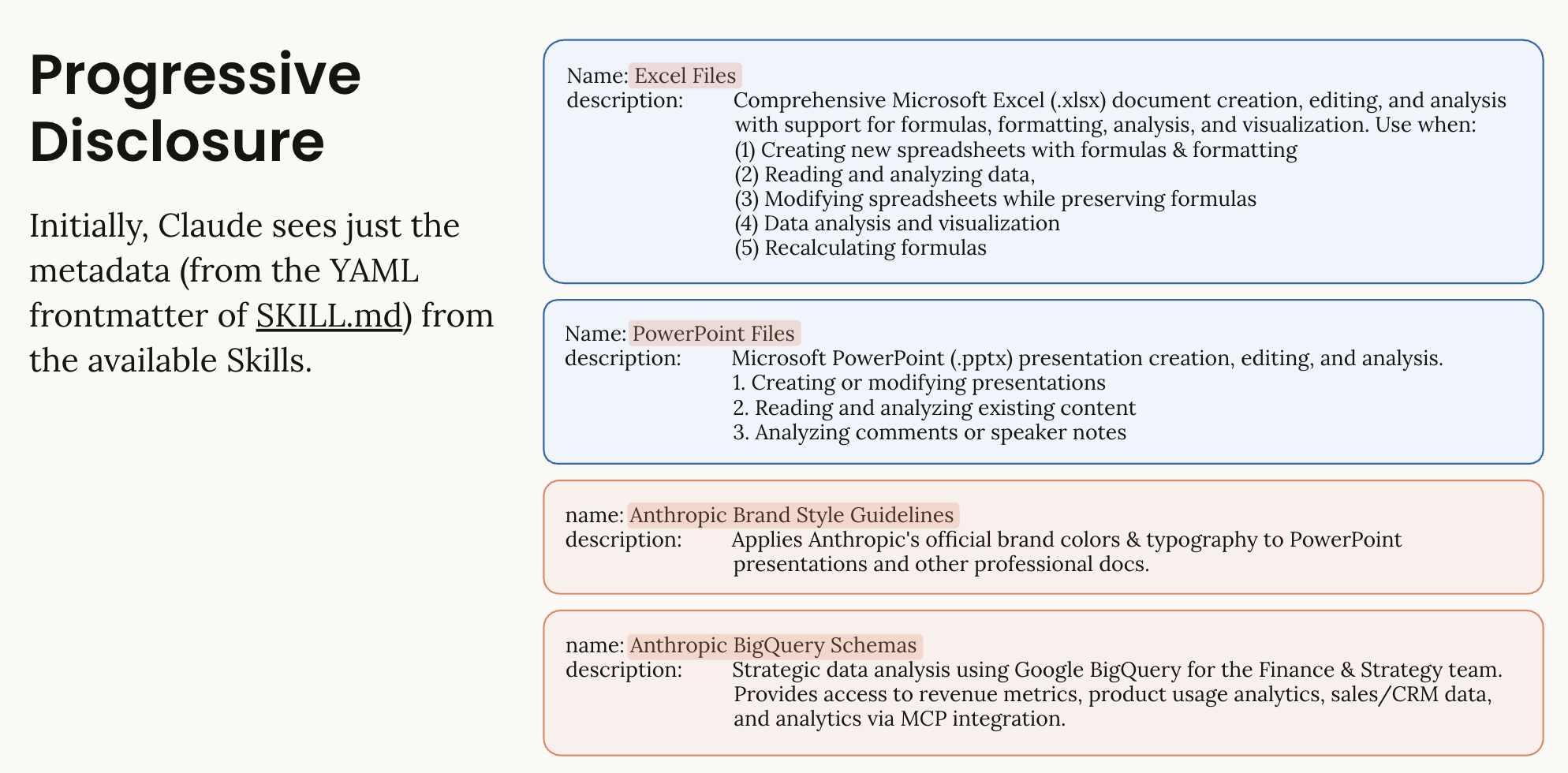

Skills improve consistency and performance across many specific tasks through progressive disclosure: metadata loads first, giving Claude just enough information to determine which Skills are relevant. It then loads the necessary details to complete the task and applies the corresponding instructions and available resources.

Example of a skill library. Image extracted from the Claude Skills introduction notebook: https://platform.claude.com/cookbook/skills-notebooks-01-skills-introduction

They also allow teams to package workflows, best practices, and institutional knowledge so Claude can apply them reliably across users.

Anyone can create Skills using Markdown. Simple Skills require no code, while advanced Skills can include executable scripts for richer automation.
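The progressive disclosure described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Claude's actual loading mechanism: the `Skill` class, the `select_skills` keyword matching, and the sample Skill bodies are all assumptions made for this example. In practice the model itself decides which Skills are relevant.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str      # level 1: metadata, always available
    body: str             # level 2: instructions, read only on demand
    loaded: bool = False


def keywords(text):
    # Crude tokenizer: lowercase words longer than three characters.
    words = (w.strip(".,:;").lower() for w in text.split())
    return {w for w in words if len(w) > 3}


def select_skills(task, skills):
    # Hypothetical relevance check: keyword overlap with the description.
    # Claude's real selection is made by the model, not by string matching.
    task_words = keywords(task)
    return [s for s in skills if task_words & keywords(s.description)]


def load_body(skill):
    # Level 2 is only touched for Skills that passed the metadata check.
    skill.loaded = True
    return skill.body


library = [
    Skill("Brand Guidelines",
          "Apply Acme Corp brand guidelines to presentations and documents",
          "## Brand Colors\n- Primary: #FF6B35 (Coral)"),
    Skill("Feedback Analysis",
          "Analyze customer feedback and build action plans",
          "## Steps\n1. Group feedback by topic"),
]

task = "Draft client presentations using our brand"
selected = select_skills(task, library)
instructions = [load_body(s) for s in selected]
print([s.name for s in selected])
```

Only the Skill whose description matches the task has its body loaded; the other stays as lightweight metadata.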

Types of Skills

Anthropic Skills

Skills created and maintained by Anthropic (for example, enhanced document creation for Excel, Word, PowerPoint, and PDF). These are broadly available and Claude uses them automatically when relevant.

Custom Skills

Skills created by you or your organization for specialized workflows and domain-specific tasks.

Organization-provisioned Skills

For Team and Enterprise plans, organization owners can provision Skills for everyone. These appear automatically in each member’s Skills list and can be enabled or disabled by default. This helps organizations:

Roll out approved workflows consistently across all employees.

Ensure teams use standardized procedures and best practices.

Deploy new capabilities without requiring individual uploads.

How to create custom Skills

1. Create a Skill.md file

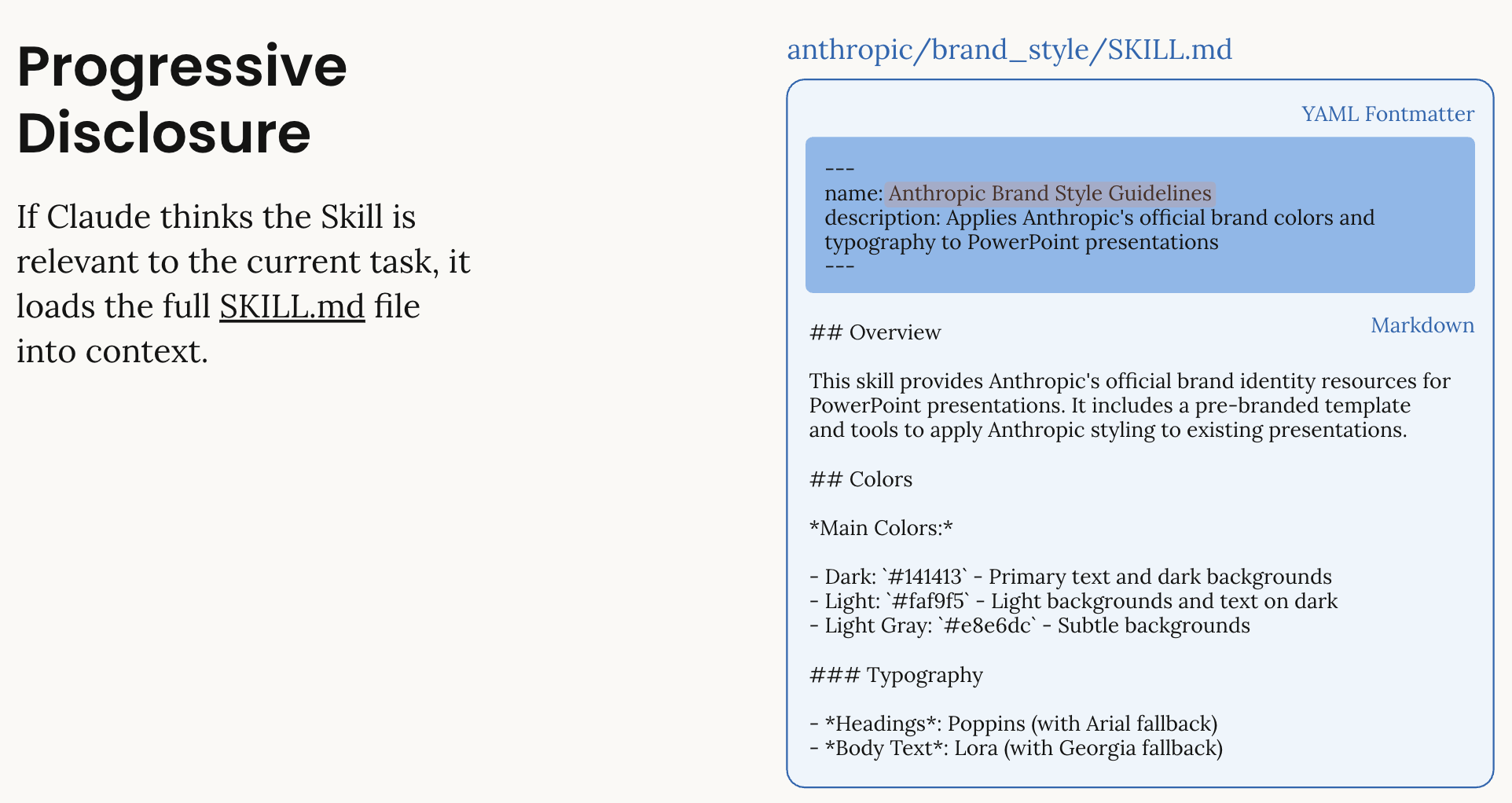

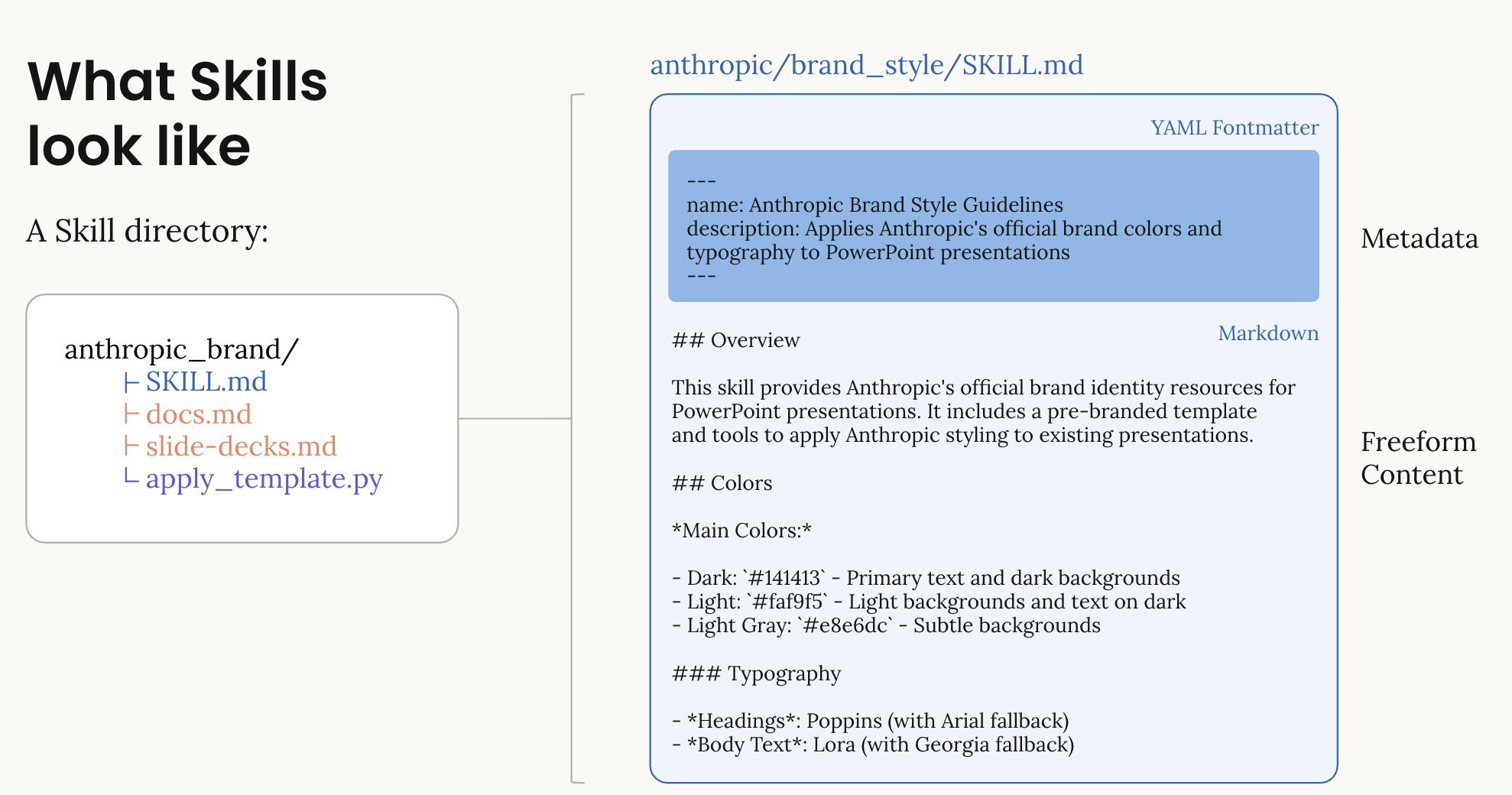

Every Skill is a directory that contains, at minimum, a Skill.md file. This file must start with YAML frontmatter holding the name and description fields, which are required metadata. It can also contain additional metadata, instructions for Claude, or reference files, scripts, or tools.

Example of a skill built around brand style guidelines. Image extracted from the Claude Skills introduction notebook: https://platform.claude.com/cookbook/skills-notebooks-01-skills-introduction

Required metadata fields:

Skill Name: A human-friendly name for your Skill (64 characters maximum).

Example: Brand Guidelines

Description: A clear description of what the Skill does and when to use it (200 characters maximum). This is critical: Claude uses it to determine when to invoke your Skill.

Example: Apply Acme Corp brand guidelines to presentations and documents, including official colors, fonts, and logo usage.

Optional metadata fields:

Dependencies: Software packages required by your Skill.

Example: python>=3.8, pandas>=1.5.0

The metadata in the Skill.md file serves as the first level of a progressive disclosure system, providing just enough information for Claude to know when the Skill should be used without having to load all of the content.
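To make the metadata rules above concrete, here is a minimal Python sketch that reads the frontmatter and checks the two required fields against the limits quoted in this article. It assumes the frontmatter is a block of simple `key: value` lines between two `---` lines; the function names `parse_frontmatter` and `validate_metadata` are hypothetical helpers, not part of any Claude tooling.

```python
MAX_NAME_LENGTH = 64          # limit quoted in this article
MAX_DESCRIPTION_LENGTH = 200  # limit quoted in this article


def parse_frontmatter(text):
    """Read `key: value` pairs between the opening and closing `---` lines.

    Deliberately tiny: real YAML (nested values, quoting) needs a YAML library.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("Skill.md must start with a frontmatter block")
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, separator, value = line.partition(":")
        if separator:
            fields[key.strip()] = value.strip()
    raise ValueError("frontmatter block is never closed")


def validate_metadata(fields):
    """Return a list of problems; an empty list means the metadata looks fine."""
    problems = []
    limits = (("name", MAX_NAME_LENGTH), ("description", MAX_DESCRIPTION_LENGTH))
    for key, limit in limits:
        value = fields.get(key, "")
        if not value:
            problems.append(f"{key} is required")
        elif len(value) > limit:
            problems.append(f"{key} exceeds {limit} characters")
    return problems


skill_md = """---
name: Brand Guidelines
description: Apply Acme Corp brand guidelines to presentations and documents, including official colors, fonts, and logo usage.
dependencies: python>=3.8, pandas>=1.5.0
---
## Overview
"""

fields = parse_frontmatter(skill_md)
print(validate_metadata(fields))
```

Running a check like this before uploading catches a missing or overlong description early, which matters because the description is what Claude reads first.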

Skill Markdown body

The Markdown body is the second level of detail. After reading the metadata, Claude loads the body of Skill.md only when the task calls for that Skill, and then follows its instructions.

Here is an example of what a Skill looks like: a Brand Guidelines Skill taken from Claude's official support website.

So, for any task or prompt that involves maintaining brand guidelines across different types of documents or reports, Claude would execute this specific skill:

Brand Guidelines Example Skill.md

## Metadata

name: Brand Guidelines

description: Apply Acme Corp brand guidelines to all presentations and documents

## Overview

This Skill provides Acme Corp's official brand guidelines for creating consistent, professional materials. When creating presentations, documents, or marketing materials, apply these standards to ensure all outputs match Acme's visual identity. Claude should reference these guidelines whenever creating external-facing materials or documents that represent Acme Corp.

## Brand Colors

Our official brand colors are:

- Primary: #FF6B35 (Coral)

- Secondary: #004E89 (Navy Blue)

- Accent: #F7B801 (Gold)

- Neutral: #2E2E2E (Charcoal)

## Typography

Headers: Montserrat Bold

Body text: Open Sans Regular

Size guidelines:

- H1: 32pt

- H2: 24pt

- Body: 11pt

## Logo Usage

Always use the full-color logo on light backgrounds. Use the white logo on dark backgrounds. Maintain minimum spacing of 0.5 inches around the logo.

## When to Apply

Apply these guidelines whenever creating:

- PowerPoint presentations

- Word documents for external sharing

- Marketing materials

- Reports for clients

## Resources

See the resources folder for logo files and font downloads.

Add resources

If you have too much information to add to a single Skill.md file (e.g., sections that only apply to specific scenarios), you can add more content by adding files within your Skill directory. For example, add a REFERENCE.md file containing supplemental and reference information to your Skill directory. Referencing it in Skill.md will help Claude decide if it needs to access that resource when executing the Skill.

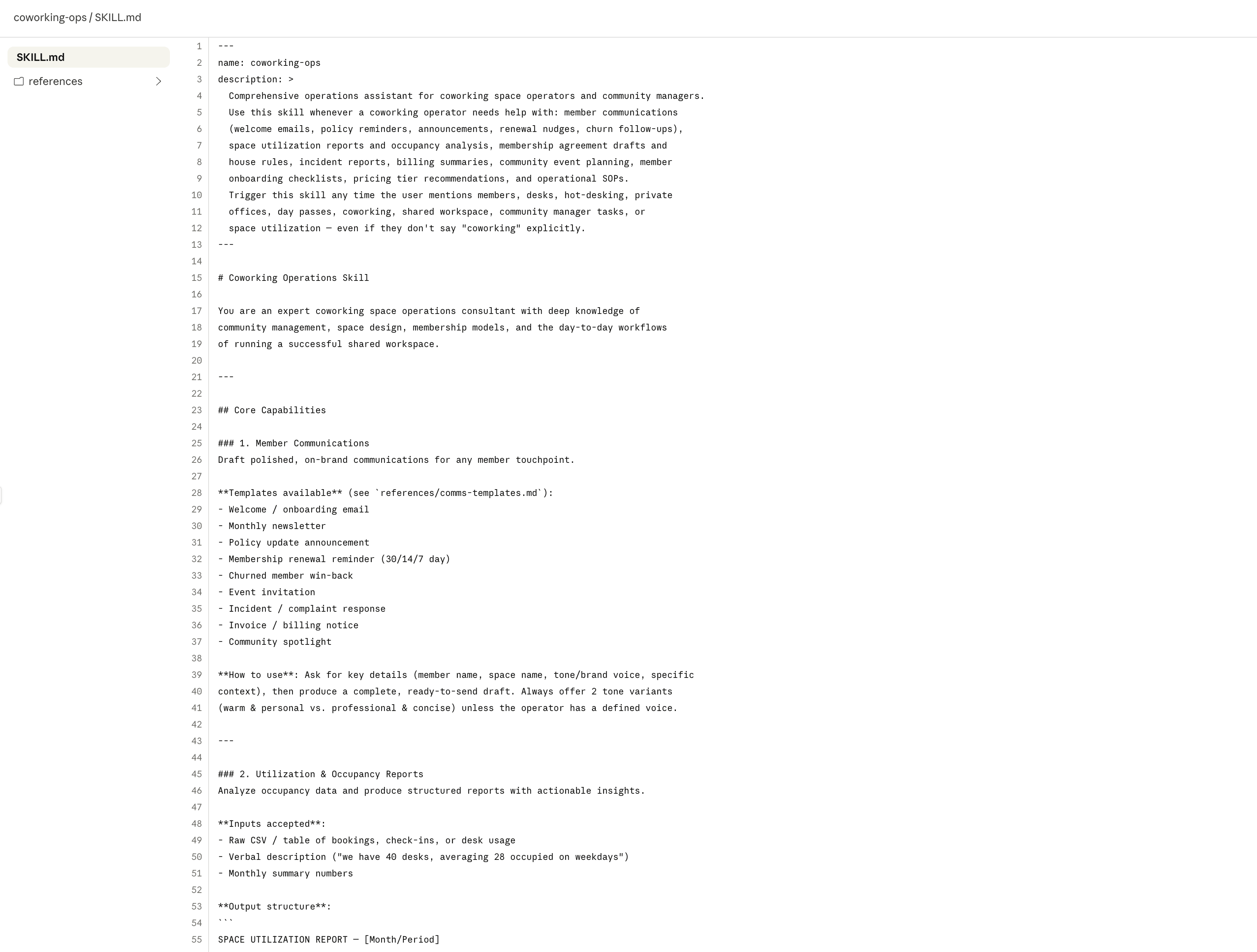

This example showcases (image below) a skill built for coworking operators, equipped with expertise in community building, space design, membership structures, and the day-to-day workflows of running a successful workspace.

Example of coworking operations skill

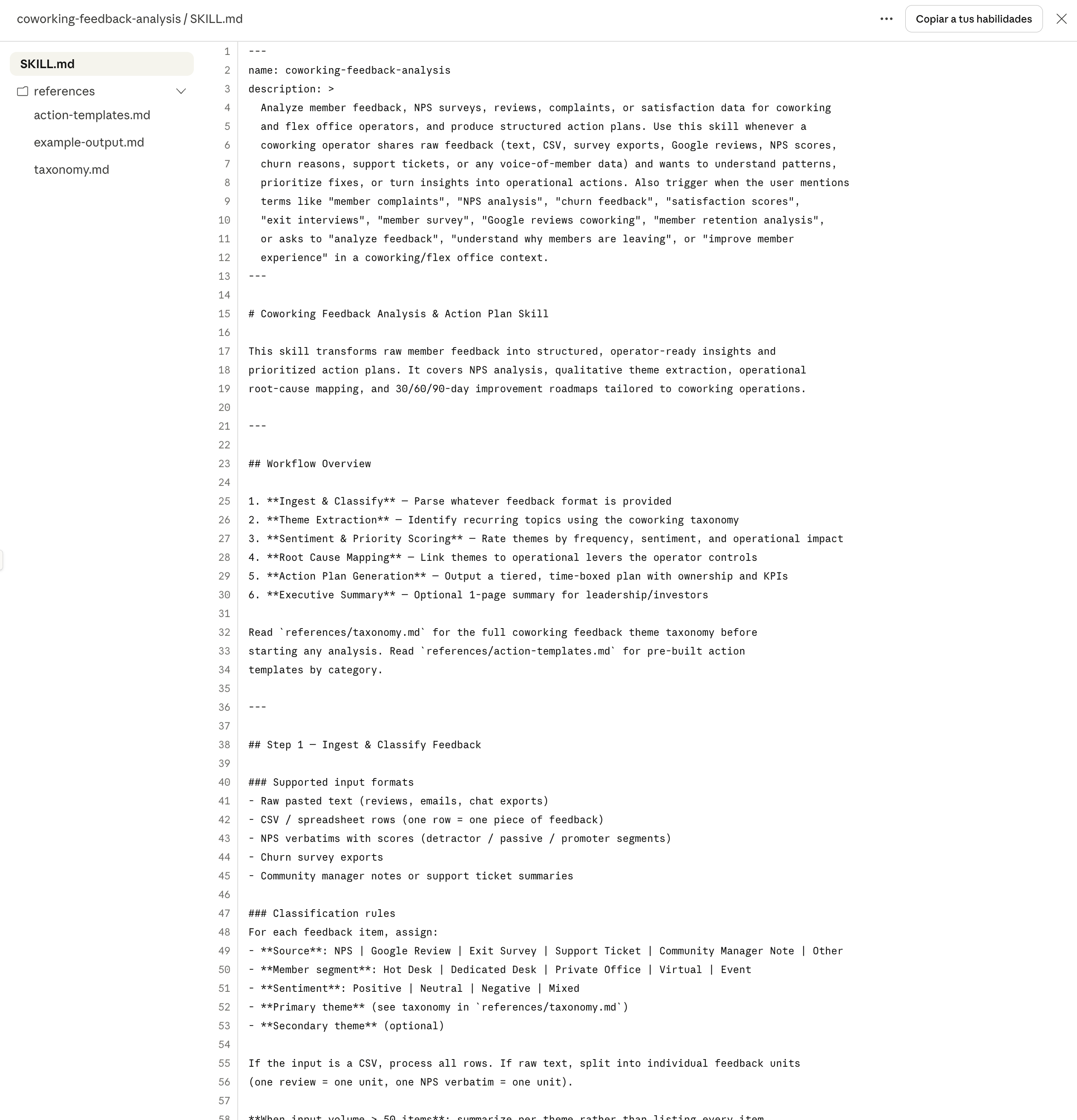

This is a more specialized skill example (image below), built around creating action plans from customer feedback analysis. You can see the reference folder including additional files such as a taxonomy, example outputs, and action templates.

Example of a coworking customer feedback analysis and action plan skill

In upcoming articles, we'll take a deeper look at specialized Claude skills for coworking operators and explore the benefits they bring.

2. Package your skill

Once your Skill folder is complete:

Ensure the folder name matches your Skill's name.

Create a ZIP file of the folder.

The ZIP should contain the Skill folder as its root (not a subfolder).

Example of a skill directory structure. Image extracted from the Claude Skills introduction notebook: https://platform.claude.com/cookbook/skills-notebooks-01-skills-introduction
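The packaging steps above can be sketched with Python's standard `zipfile` module. This is one possible way to build the archive, assuming a Skill folder on disk that contains a Skill.md file; `package_skill` is a hypothetical helper and the `brand-guidelines` folder is a throwaway example.

```python
import tempfile
import zipfile
from pathlib import Path


def package_skill(skill_dir, output_dir):
    """Zip a Skill folder so the folder itself is the root entry of the archive."""
    skill_dir = Path(skill_dir).resolve()
    if not (skill_dir / "Skill.md").is_file():
        raise FileNotFoundError(f"{skill_dir} has no Skill.md")
    archive = Path(output_dir) / f"{skill_dir.name}.zip"
    with zipfile.ZipFile(archive, "w", zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(skill_dir.rglob("*")):
            if path.is_file():
                # Paths are stored relative to the parent, so every entry
                # starts with the Skill folder name.
                zf.write(path, path.relative_to(skill_dir.parent).as_posix())
    return archive


# Demo on a temporary folder that is deleted afterwards.
with tempfile.TemporaryDirectory() as tmp:
    skill = Path(tmp) / "src" / "brand-guidelines"
    (skill / "resources").mkdir(parents=True)
    (skill / "Skill.md").write_text("---\nname: Brand Guidelines\n---\n", encoding="utf-8")
    (skill / "resources" / "logo.txt").write_text("placeholder", encoding="utf-8")
    out = Path(tmp) / "dist"
    out.mkdir()
    archive = package_skill(skill, out)
    with zipfile.ZipFile(archive) as zf:
        names = sorted(zf.namelist())

print(names)
```

Writing the archive to a separate output folder keeps the ZIP from being included in itself on a second run.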

3. Test your skill

1. Review your Skill.md for clarity.

2. Confirm the description clearly signals when Claude should use the Skill.

3. Verify all referenced files exist in the correct locations.

4. Test with example prompts to ensure Claude invokes it appropriately.

5. Enable the Skill in Customize > Skills.

6. Try several different prompts that should trigger it.

7. Review Claude's thinking to confirm it's loading the Skill.

8. Iterate on the description if Claude isn't using it when expected.
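Step 3 of this checklist, verifying that referenced files exist, is easy to automate. The sketch below is a rough illustration: it assumes references appear in Skill.md as relative paths with a handful of common extensions, and `missing_references` is a hypothetical helper name. A real Skill may reference files in other ways, so treat the pattern as a starting point.

```python
import re
import tempfile
from pathlib import Path

# Assumption: references are relative paths ending in one of these extensions.
REFERENCE_PATTERN = re.compile(r"[\w./-]+\.(?:md|py|txt|json|csv)\b")


def missing_references(skill_dir):
    """List paths mentioned in Skill.md that do not exist inside the Skill folder."""
    skill_dir = Path(skill_dir)
    text = (skill_dir / "Skill.md").read_text(encoding="utf-8")
    referenced = sorted(set(REFERENCE_PATTERN.findall(text)))
    return [ref for ref in referenced if not (skill_dir / ref).is_file()]


# Demo: REFERENCE.md exists, the script does not.
with tempfile.TemporaryDirectory() as tmp:
    skill = Path(tmp) / "feedback-analysis"
    skill.mkdir()
    (skill / "REFERENCE.md").write_text("Taxonomy notes", encoding="utf-8")
    (skill / "Skill.md").write_text(
        "See REFERENCE.md for the taxonomy and scripts/report.py for the report script.",
        encoding="utf-8",
    )
    missing = missing_references(skill)

print(missing)
```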

Now, let’s explore an AI signal we consider important when deploying agents in a production environment.

AI Signals

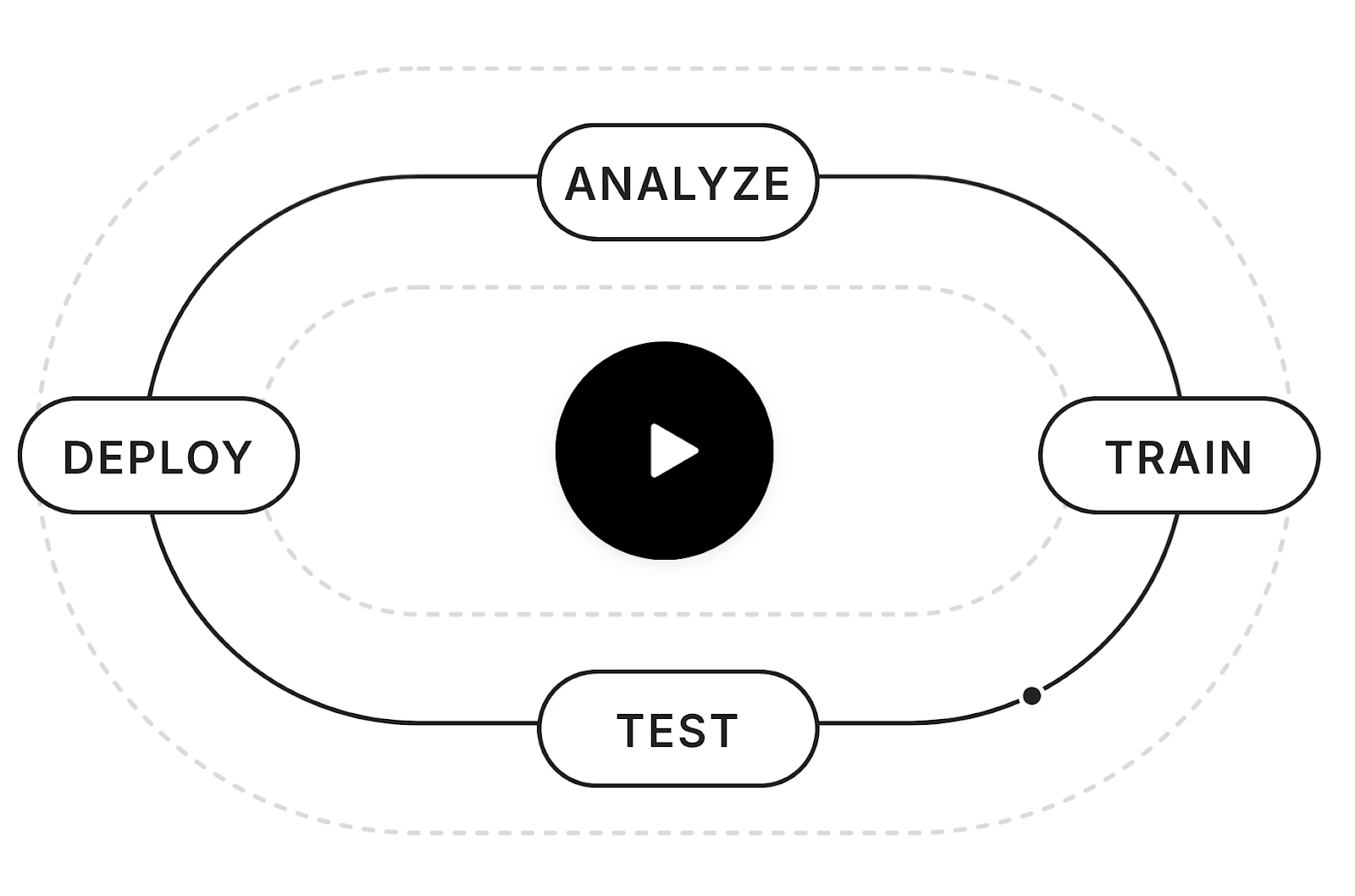

The Four Layers AI Agents Need to Build Trust and Drive Customer Adoption in Customer Support

Image extracted from the Fin website.

Are you considering adding a customer support agent to your coworking website? Before launching it, have you defined the capabilities, guardrails, and workflows it needs to deliver a consistent, reliable, and high-quality support experience for your members?

Here’s what we’ve learned after a year of building AI agents:

Great customer support doesn’t come from simply adding a chatbot to your website. Without the right foundations, trust breaks down. And without trust, AI adoption simply doesn’t happen.

Every effective AI agent for customer support must be built on four layers:

Train · Test · Deploy · Analyze

In the AI agent market, we're seeing a clear trend toward building solutions that integrate:

Training agents with business-specific knowledge

Prompt customization and agent behavior control

Deployment across multiple channels (web, email, etc.)

Basic analytics on agent behavior (conversations, resolution rates, usage metrics, etc.)

All of that is fine. But there’s a critical layer that many AI agent solutions still ignore:

Continuous monitoring of agent response quality over time.

Many teams are still testing their AI manually, which leads to:

Inconsistent results

Model updates breaking optimized workflows

No traceability of performance over time

Silent degradation after prompt changes

Knowledge base updates negatively impacting quality

When there is no systematic evaluation layer, the consequence is clear: customer trust decreases.

Some solutions in the market are already moving in this direction:

Intercom Fin, with simulation and testing tools to systematically evaluate agent behavior.

OpenAI Evals, a simple way to create evaluations and test prompts and responses based on content, style, and instruction criteria.
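To show what a systematic evaluation layer means in practice, here is a minimal regression check in Python. It is a sketch under stated assumptions, not the API of Fin or OpenAI Evals: the agent is a stub function, the test cases and answers are invented for a coworking space, and the pass criterion (required phrases appear in the answer) is deliberately simple.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    question: str
    must_include: list  # phrases a good answer has to contain


def run_suite(agent, cases):
    """Ask every question and record whether the answer contains all required phrases."""
    results = []
    for case in cases:
        answer = agent(case.question).lower()
        passed = all(phrase.lower() in answer for phrase in case.must_include)
        results.append((case.question, passed))
    return results


def pass_rate(results):
    return sum(1 for _, ok in results if ok) / len(results)


def regressed(current, baseline, tolerance=0.05):
    """Flag a drop in pass rate larger than the allowed tolerance."""
    return current < baseline - tolerance


def make_agent(answers):
    # Stub agent for the demo: looks answers up in a dictionary.
    return lambda question: answers.get(question, "I don't know.")


cases = [
    EvalCase("What are your opening hours?", ["8am", "8pm"]),
    EvalCase("Can I book a meeting room?", ["member portal"]),
]

agent_before = make_agent({
    "What are your opening hours?": "We are open from 8am to 8pm on weekdays.",
    "Can I book a meeting room?": "Yes, through the member portal.",
})
# Same agent after a hypothetical prompt change that lost one answer.
agent_after = make_agent({
    "What are your opening hours?": "We are open from 8am to 8pm on weekdays.",
})

baseline = pass_rate(run_suite(agent_before, cases))
current = pass_rate(run_suite(agent_after, cases))
print(baseline, current, regressed(current, baseline))
```

Running the same suite after every prompt, model, or knowledge base change is what turns silent degradation into a visible signal.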

In AI Customer Support Agents, Trust is the Product.

Beyond Coworking: AI to Watch

Fin: A Platform for Building AI Customer Support Agents

Let’s explore how this four-layer framework comes together in practice through Fin.

Train · Test · Deploy · Analyze

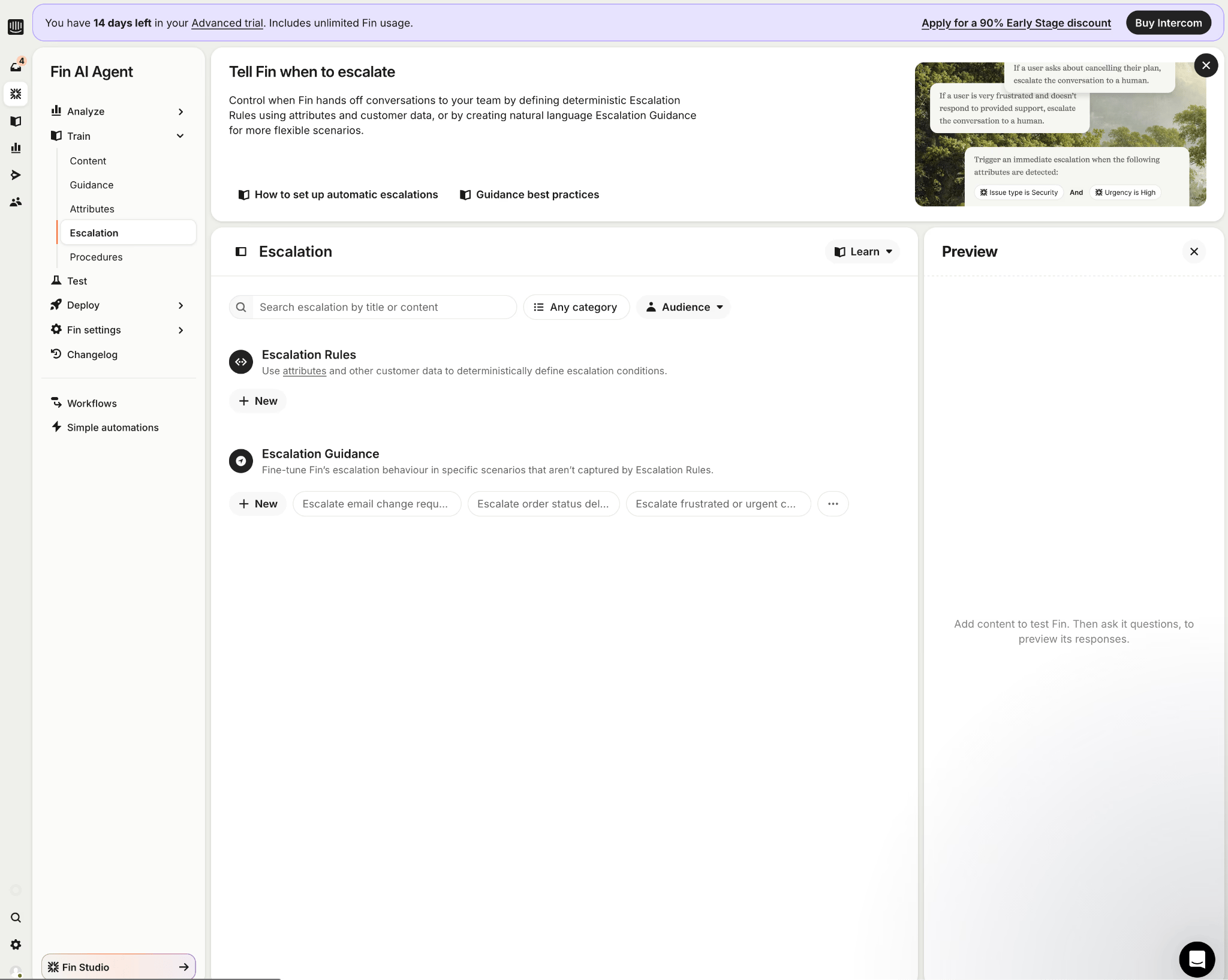

1. Train

This is the layer where the agent is trained by providing the necessary business knowledge, resources, data, and context, as well as defining its behavior.

It allows you to upload and sync content from different sources and integrate with tools such as Zendesk, Notion, Guru, Confluence, and Salesforce.

In this layer, you also define:

The agent’s communication style

Operating rules

Cases and scenarios where human intervention is required

Key functionalities

1. Content (Knowledge Base)

Management of the knowledge base with articles, documentation, and integrations. This information is used to train the agent with business context.

2. Guidance

Allows you to customize how Fin responds: tone, style, and communication structure.

3. Attributes

Fin can be trained to detect attributes in each conversation (issue type, urgency, sentiment, etc.). These attributes enable intelligent routing and escalation to the appropriate team.

4. Escalation

Definition of rules so that Fin escalates conversations to the team when necessary. Escalation to human teams can be done through deterministic rules based on attributes and customer data, or predefined scenarios.
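Deterministic escalation based on attributes and customer data can be pictured as an ordered list of rules. The Python sketch below is purely illustrative and does not reflect how Fin is configured: the attribute names, the `plan` field, the team names, and the rules themselves are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    issue_type: str   # detected attribute
    urgency: str      # detected attribute
    sentiment: str    # detected attribute
    plan: str         # customer data


# Ordered rules: the first matching rule decides who takes over.
ESCALATION_RULES = [
    ("billing team", lambda c: c.issue_type == "billing" and c.urgency == "high"),
    ("account manager", lambda c: c.plan == "premium" and c.sentiment == "negative"),
]


def route(conversation):
    """Return the team to escalate to, or None if the agent keeps the conversation."""
    for team, matches in ESCALATION_RULES:
        if matches(conversation):
            return team
    return None


urgent_billing = Conversation("billing", "high", "neutral", "standard")
unhappy_premium = Conversation("access", "low", "negative", "premium")
routine = Conversation("wifi", "low", "neutral", "standard")

print(route(urgent_billing), route(unhappy_premium), route(routine))
```

Because the rules are plain conditions rather than model judgments, the same conversation attributes always lead to the same handoff.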

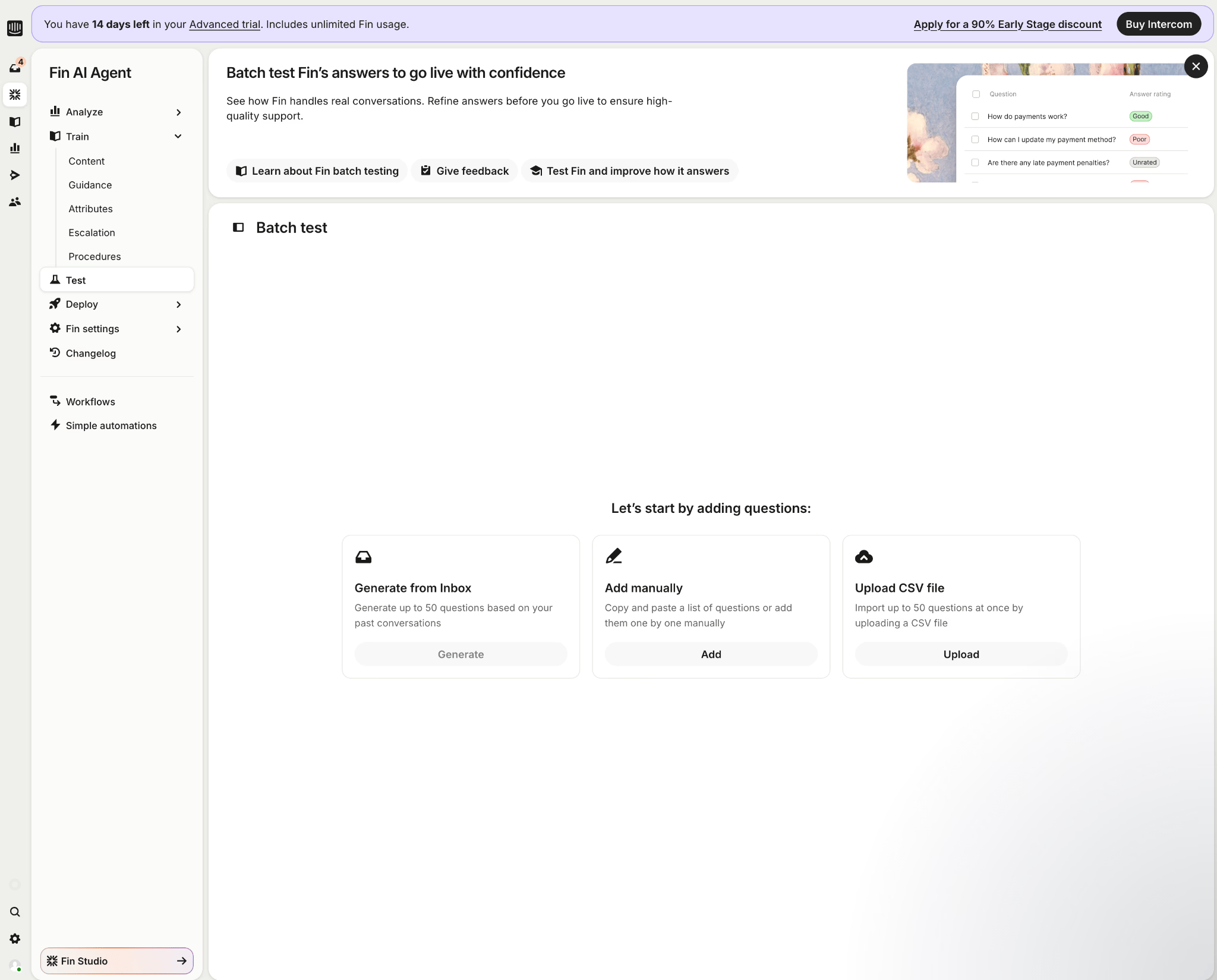

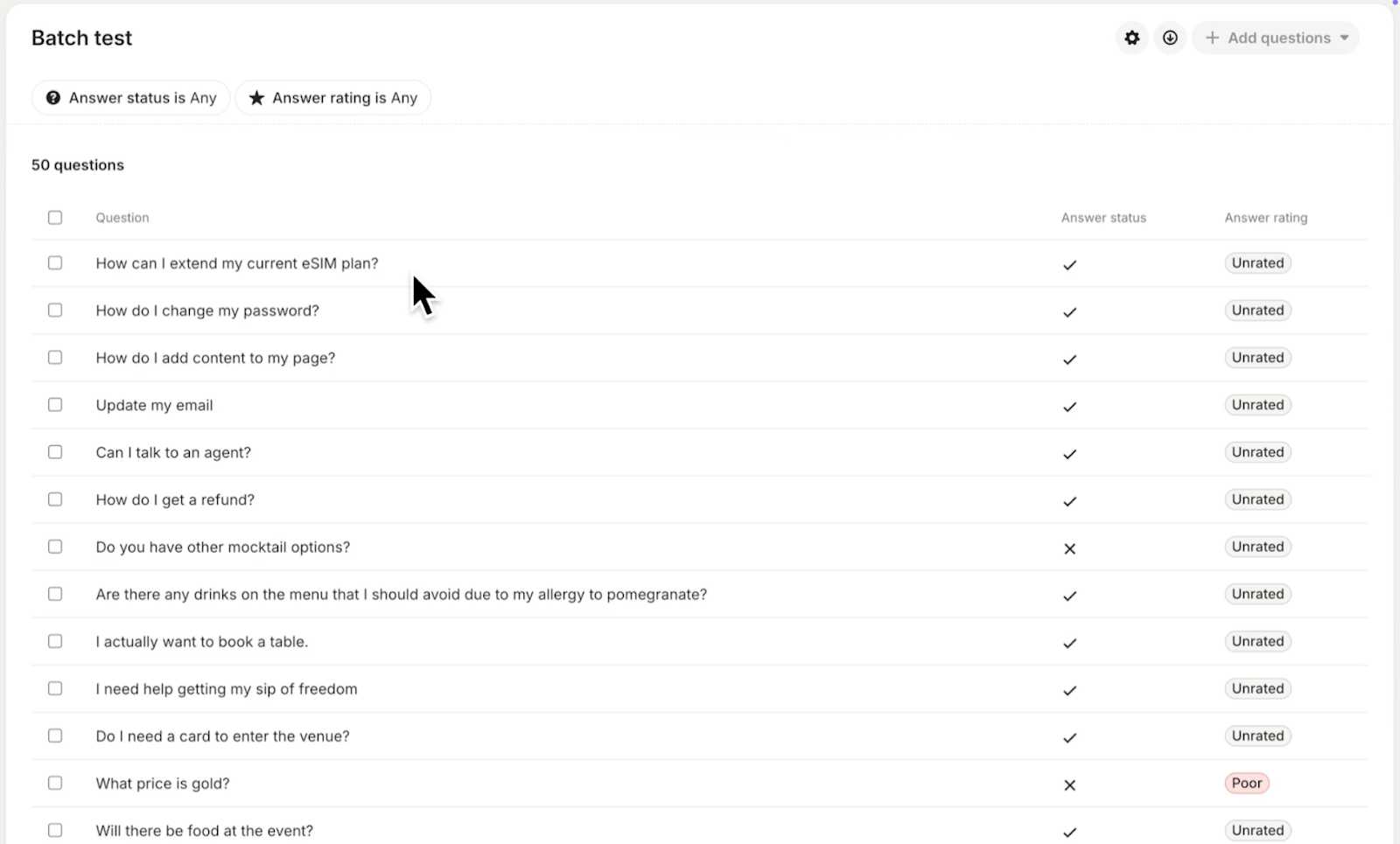

2. Test

This layer allows you to validate the agent’s behavior before deploying it to production, with the main objective of building confidence in the quality of its responses.

Fin enables you to simulate real customer questions and evaluate:

Response accuracy

Content used in the response

Applied guidance

Tone and consistency

Key functionalities

Automatic question generation based on historical conversations or manual upload.

Access to the details of each response: what content was used and why.

Response evaluation with ratings: Good / Acceptable / Poor, including reasons and suggestions for improvement.

Ability to add notes that are saved to improve the training process.

Scenario and topic based testing: onboarding, top support questions, technical issues, premium customers, etc.

Segmented testing by user type (Lead, Customer) or region (US, UK, Europe), to validate response consistency (content, tone, etc.).

Example of a set of questions to evaluate:

3. Deploy

Fin can be deployed across multiple channels:

1. Chat

Fin assists customers in real time, responds, and escalates when necessary.

It supports channels such as web chat, WhatsApp, SMS, Facebook/Instagram, Messenger, and Slack.

2. Email

Interprets incoming emails, responds using the content leveraged during training, and escalates more complex cases to the team.

3. Phone

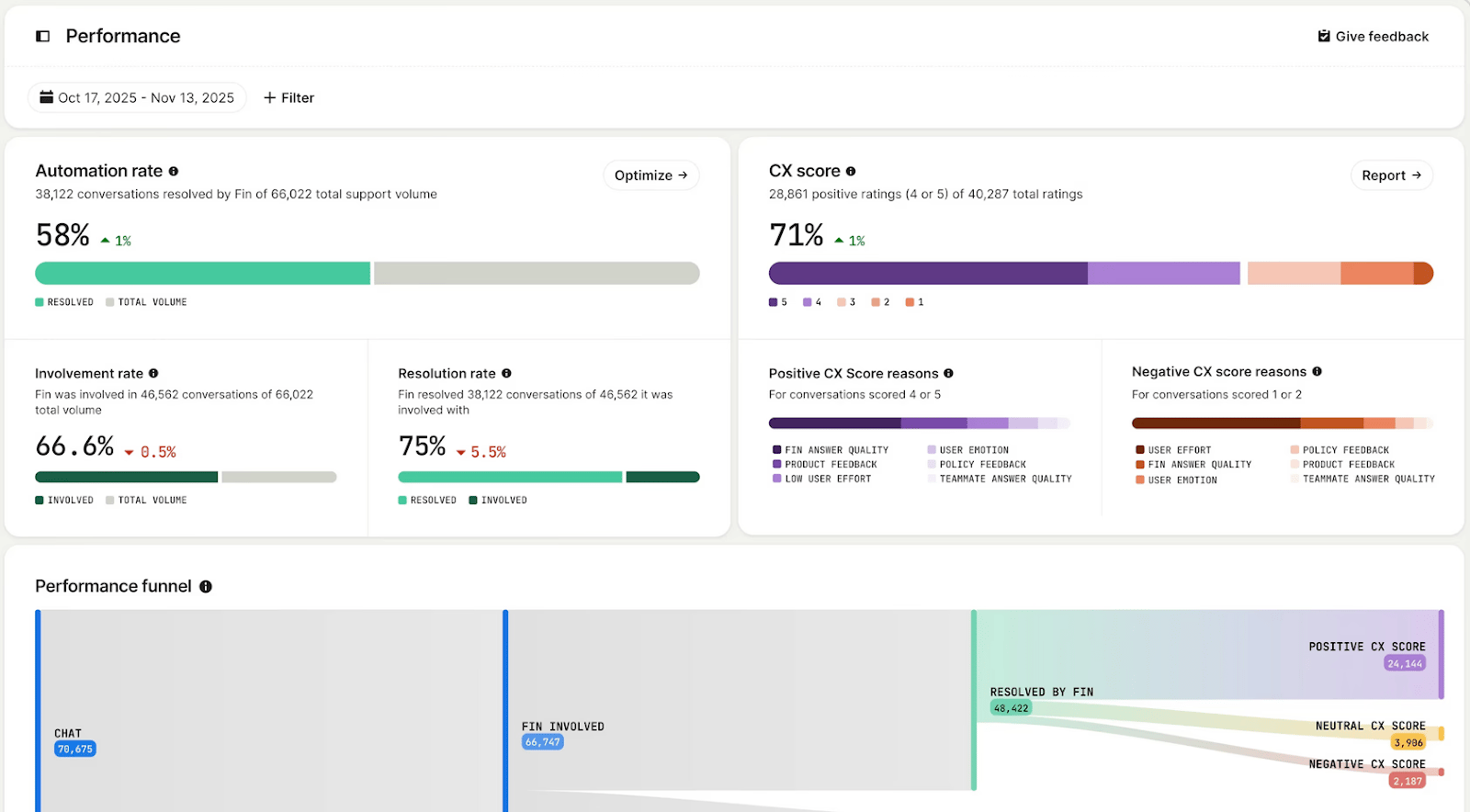

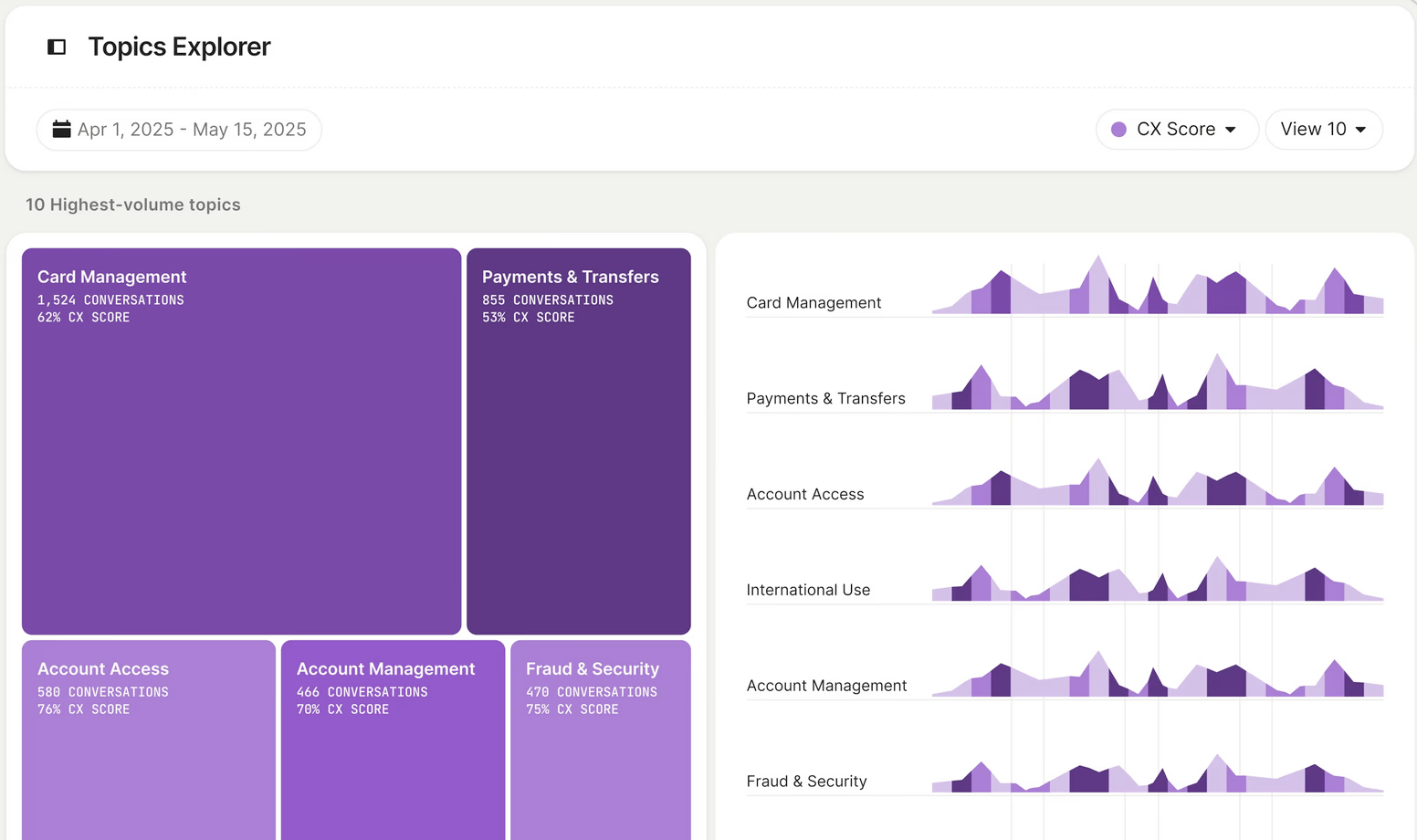

4. Analyze

A layer designed to measure and optimize the agent’s performance.

It allows you to visualize metrics such as:

Resolution rate

Involvement rate

Customer experience score

Topic trends

Knowledge gaps

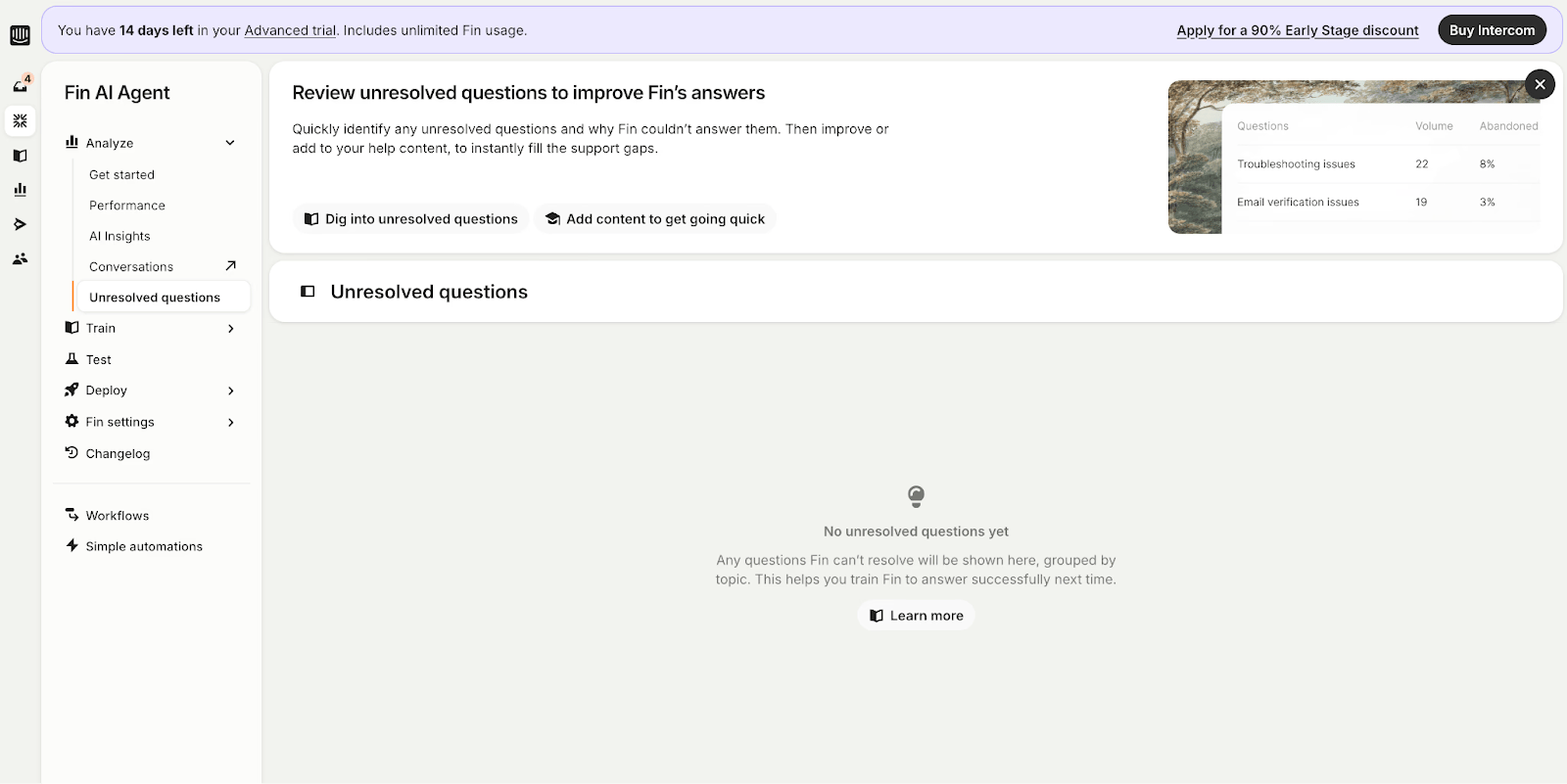
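As a rough illustration of how the first two metrics could be computed from conversation records, here is a short Python sketch. The definitions are assumptions made for this example: involvement rate is taken as the share of all conversations the agent took part in, and resolution rate as the share of those conversations it resolved without a handoff. Fin's exact definitions may differ, and the field names are hypothetical.

```python
def support_metrics(conversations):
    """Compute involvement and resolution rates from a list of conversation records."""
    total = len(conversations)
    involved = [c for c in conversations if c["agent_involved"]]
    resolved = [c for c in involved if c["resolved_by_agent"]]
    return {
        "involvement_rate": len(involved) / total if total else 0.0,
        "resolution_rate": len(resolved) / len(involved) if involved else 0.0,
    }


# Invented sample: 5 conversations, 4 with the agent involved, 3 resolved by it.
sample = [
    {"agent_involved": True, "resolved_by_agent": True},
    {"agent_involved": True, "resolved_by_agent": True},
    {"agent_involved": True, "resolved_by_agent": True},
    {"agent_involved": True, "resolved_by_agent": False},
    {"agent_involved": False, "resolved_by_agent": False},
]

metrics = support_metrics(sample)
print(metrics)
```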

Key functionalities

1. Performance

Dashboard with KPIs and suggested actions to improve the agent’s responses.

2. AI Insights & Conversation Topics

Provides a unified view of support handled by Fin and by teams, identifying patterns in question topics, recommendations, and improvement opportunities.

3. Unresolved Questions

Identifies unanswered questions and explains why Fin was unable to respond.

Very useful for detecting content gaps and improving the knowledge base.

The Analyze layer turns data into continuous improvement.

We hope this analysis of Fin has given you the clarity and insights needed to understand exactly what it takes to run a customer support agent, and the benefit each layer brings.

Final Thoughts

AI agents are quickly moving beyond conversational interfaces into systems that can execute specialized tasks and operate in production environments.

On one side, capabilities like Claude Skills show how agents are becoming more useful by learning structured workflows, organizational knowledge, and repeatable processes. Instead of responding generically, AI can now apply domain-specific playbooks that reflect how teams actually work.

On the other side, deploying AI agents successfully requires more than capability: it requires trust. Without trust, AI adoption simply doesn’t happen.

That’s why the four-layer framework we explored is so important. Without structured training, systematic testing and continuous evaluation, controlled deployment, and analytics to improve performance over time, even powerful AI systems can quickly become unreliable. These four layers are what we believe ultimately drive customer adoption and build trust in AI agents.