
AI is becoming a bigger part of how coworking spaces run every day. The technology is advancing fast, and as more businesses rely on AI tools, understanding how these systems perform has never been more important.

This edition of Latest AI News & Trends looks at AI Evals, the structured way to measure whether an AI model is accurate, consistent, and reliable. We also explore why evals matter for coworking operators, how Nexudus is helping the industry access better data, and how new AI tools are reshaping real-time operations in flexible workspaces.

Let’s dive in.

Latest AI News & Trends

What AI Evals are and why they’re so relevant right now

More and more companies are building AI-based products and services, but very few have an accurate picture of whether their LLM is actually working as it should.

Does it respond to prompts accurately?

Does it follow instructions consistently?

Does it remain stable across versions?

Does its performance degrade over time?

Does it respect the tone and style defined in the prompts?

Without structured evaluation, it becomes nearly impossible to answer these questions. And this is where AI evaluations come in.

What does it mean to evaluate an AI model?

Evaluating is not just manually checking if the model “seems” to respond well. Evaluation involves:

Defining what we want to measure and why.

Establishing metrics, quality criteria, and the necessary data.

Designing how results will be visualized, ensuring traceability and a clear view of model performance over time.

Many teams still test their AI manually, which leads to:

Inconsistent results.

Model changes that break previously optimized workflows.

Lack of traceability of performance over time.

Prompt adjustments that degrade quality without anyone noticing until it’s too late.

If you work with AI, this probably sounds familiar.

AI Evals

Evals systematically test model outputs to verify that they meet the content, style, and functionality criteria defined in the prompts.

Building evals to understand how your LLM-based applications behave, especially when updating or testing new models, is an essential component of developing reliable AI applications.

How to create and run Evals with OpenAI

OpenAI includes the Datasets functionality as a fast and simple way to create evals and test prompts. The workflow is straightforward:

1. Upload a CSV

This dataset becomes the foundation for your tests. Each column can be dynamically integrated into the prompt.

2. Build a prompt

From the dashboard, you can create different prompts that reuse the same dataset.

Example: if the dataset includes a company column, your prompt could be:

“Generate a detailed financial report on the company {{company}}.”
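To make the column substitution concrete, here is a minimal Python sketch of how a `{{company}}` placeholder might be filled from each CSV row. The `render_prompt` helper and the sample company names are hypothetical illustrations, not part of OpenAI's tooling, which handles this substitution for you in the dashboard.

```python
import csv
import io
import re

# Hypothetical sample data standing in for the uploaded CSV.
SAMPLE_CSV = "company\nAcme Corp\nGlobex\n"

TEMPLATE = "Generate a detailed financial report on the company {{company}}."

# Matches placeholders such as {{company}} or {{ company }}.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_prompt(template, row):
    """Fill every {{column}} placeholder with the matching value from one row."""
    def substitute(match):
        column = match.group(1)
        if column not in row:
            raise KeyError(f"Dataset has no column named {column!r}")
        return row[column]

    return PLACEHOLDER.sub(substitute, template)


rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
prompts = [render_prompt(TEMPLATE, row) for row in rows]

for prompt in prompts:
    print(prompt)
```

Each row produces one prompt, so the same dataset can be reused with any template that references its column names.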

3. Generate and annotate outputs

With the data and prompt configured, you can generate model outputs to analyze how the model performs the task.

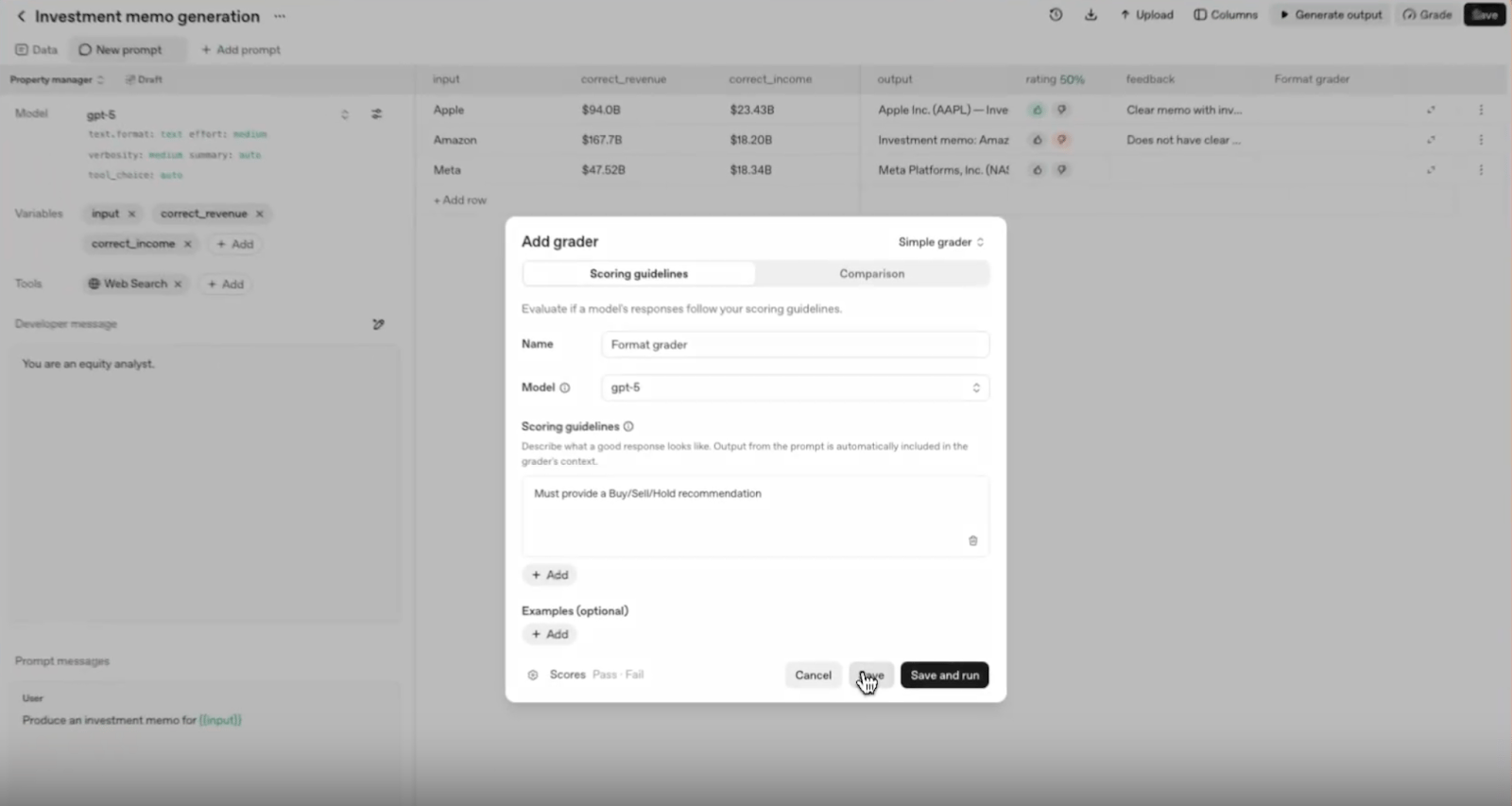

Screenshot of Evals by OpenAI

You can then annotate these outputs so the system can improve its performance over time.

Annotations are a fundamental part of evaluating and improving model outputs. Good annotation:

Serves as the ground truth for desired model behavior, even in very specific cases, including subjective elements such as style and tone.

Helps diagnose prompt weaknesses, especially in edge cases.

Ensures graders stay aligned with your intended behavior.

You can use various types of annotations:

Good/Bad evaluation, indicating your assessment of the output.

Detailed comments on each model output.

Custom categories.

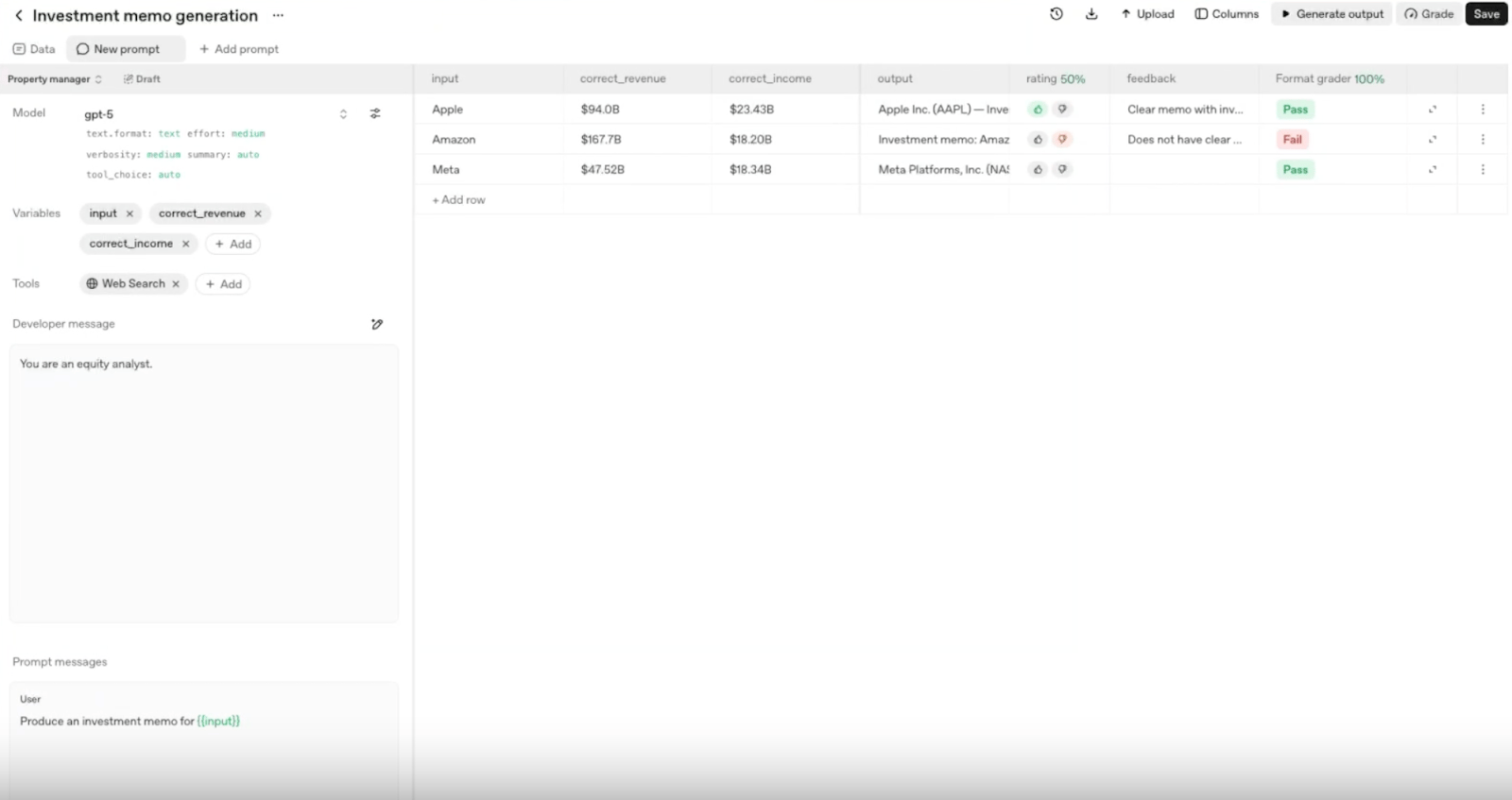
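If you keep annotations outside the dashboard, the three annotation types above could be captured in a simple record. This is only a sketch: the `Annotation` class, its field names, and the sample categories are assumptions made for illustration, not an OpenAI data format.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Annotation:
    """One human judgment about one model output (hypothetical schema)."""
    output_id: str
    rating: str                                           # "good" or "bad"
    comment: Optional[str] = None                         # detailed free-text feedback
    categories: List[str] = field(default_factory=list)  # custom categories

    def __post_init__(self):
        if self.rating not in ("good", "bad"):
            raise ValueError(f"rating must be 'good' or 'bad', got {self.rating!r}")


def bad_output_categories(annotations):
    """Count how often each custom category appears on outputs rated 'bad'."""
    counts = Counter()
    for annotation in annotations:
        if annotation.rating == "bad":
            counts.update(annotation.categories)
    return counts


annotations = [
    Annotation("out-1", "good"),
    Annotation("out-2", "bad", "Too formal for our brand.", ["tone"]),
    Annotation("out-3", "bad", "Quoted the wrong price.", ["accuracy", "tone"]),
]
counts = bad_output_categories(annotations)
print(counts.most_common())
```

Counting categories on the outputs rated bad is one quick way to see which prompt weakness shows up most often.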

4. Add graders

Graders are a way to evaluate your model’s performance against reference answers.

While human annotations are essential, automated graders make it possible to scale the evaluation process. Depending on how they’re configured, they can evaluate:

Whether the LLM’s automated judgments remain aligned with the manually defined labels.

Whether a new prompt improves or worsens results.

Whether a model change introduces regressions.

Whether changes to model parameters produce improvements.

Whether new data cases reveal flaws in the original prompt.
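As a rough illustration of the idea, the sketch below grades outputs against reference answers and flags a regression when a candidate prompt scores lower than the baseline. The exact-match rule, the function names, and the sample answers are assumptions chosen for simplicity. Graders in OpenAI's tooling are configured in the product rather than written like this.

```python
def exact_match_grader(output, reference):
    """Pass when output and reference match, ignoring case and outer whitespace."""
    return output.strip().lower() == reference.strip().lower()


def pass_rate(outputs, references, grader=exact_match_grader):
    """Share of outputs that the grader accepts, between 0.0 and 1.0."""
    if len(outputs) != len(references):
        raise ValueError("outputs and references must have the same length")
    if not references:
        raise ValueError("at least one reference answer is required")
    passed = sum(1 for o, r in zip(outputs, references) if grader(o, r))
    return passed / len(references)


def is_regression(baseline_rate, candidate_rate, tolerance=0.0):
    """True when the candidate scores worse than the baseline beyond the tolerance."""
    return candidate_rate < baseline_rate - tolerance


# Hypothetical reference answers and outputs from two prompt versions.
references = ["Yes", "No", "Open 24/7", "25 per day"]
baseline_outputs = ["yes", "No", "Open 24/7", "30 per day"]
candidate_outputs = ["yes", "no", "Closed", "30 per day"]

baseline = pass_rate(baseline_outputs, references)
candidate = pass_rate(candidate_outputs, references)
print(baseline, candidate, is_regression(baseline, candidate))
```

Exact matching only suits short factual answers. Subjective criteria such as tone usually need a model-based grader whose judgments are checked against human labels.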

Screenshot of Evals by OpenAI

Evals API

For large-scale evaluations, continuous monitoring, and version-to-version comparison, OpenAI offers the Evals API.

With it you can:

Run evaluations asynchronously.

Handle large volumes of data.

Automatically detect regressions across prompts or models.

Build a continuous and automated quality system.

If you’re building AI products, evaluations are not optional.

Evals allow you to:

Test new prompts before moving them to production.

Validate whether a model change introduces regressions.

Measure improvements in accuracy, style, tone, content, or functionality.

Run hundreds of automated tests.

Monitor performance over time and across versions.

Continuously understand model behavior and identify degradation.

The real goal of AI Evals is to precisely understand how your model behaves, where it fails, where it improves, and how to make it more reliable with every iteration.

Are you already evaluating your AI models in a structured way, or are you still relying mostly on manual testing?

What kinds of Evals have you implemented so far, and what insights have you gained about model behavior to support continuous improvement?

A 6-Step Framework for Evaluating AI Systems

This is the 6-step process we use to conduct AI Evaluations, combining human evaluation and automatic evaluation with LLMs. A hybrid, scalable, production-oriented approach.

1. We start with a very basic prompt; it’s just the starting point. Later on, analyzing the responses gives us insights to improve both the prompt and the knowledge base that the LLMs use in their answers.

2. We define clear acceptance criteria and a scoring system for the AI’s responses (Bad, Average, Great).

3. We select 100 real user inputs and analyze the quality of the AI model’s responses. For each defined acceptance criterion (for example, tone or relevance), we perform manual labeling and add qualitative feedback explaining why the response is or isn’t good. This process helps improve the initial prompt and leads to the creation of a “golden dataset” based on human evaluation (Human Eval).

4. Once the initial dataset with manual labels is created, we apply an evaluation using LLMs (LLM-as-Judge Eval). The goal is to validate that the LLM classifies responses consistently with the human labeling process. When this validation is successful for each criterion, the evaluation can be reliably scaled.

5. Next, we use a larger sample (around 250 user inputs) to analyze the model’s responses. If quality remains stable at this volume, we continue scaling the solution.

6. Finally, we productionize the solution for a small subset of users through A/B tests and run continuous evaluation (User Eval) of the quality of the AI’s responses. If response quality meets the defined acceptance criteria, the solution is progressively scaled to a larger set of users.
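The validation in the fourth step, checking that the LLM judge agrees with the human labels on each criterion, can be sketched as a simple agreement rate. The function names, the sample records, and the 0.9 threshold are assumptions for illustration. The framework above does not specify a particular metric or cutoff.

```python
from collections import defaultdict

LABELS = ("Bad", "Average", "Great")


def agreement_by_criterion(records):
    """Share of responses where the judge label equals the human label, per criterion.

    `records` is an iterable of (criterion, human_label, judge_label) tuples.
    """
    matches = defaultdict(int)
    totals = defaultdict(int)
    for criterion, human, judge in records:
        if human not in LABELS or judge not in LABELS:
            raise ValueError(f"Unknown label in {(criterion, human, judge)!r}")
        totals[criterion] += 1
        if human == judge:
            matches[criterion] += 1
    return {criterion: matches[criterion] / totals[criterion] for criterion in totals}


def criteria_ready_to_scale(agreement, threshold=0.9):
    """Criteria whose agreement meets the threshold (0.9 is an assumed cutoff)."""
    return sorted(c for c, rate in agreement.items() if rate >= threshold)


# Hypothetical labels for four responses on two criteria.
records = [
    ("tone", "Great", "Great"),
    ("tone", "Bad", "Bad"),
    ("tone", "Average", "Average"),
    ("tone", "Great", "Great"),
    ("relevance", "Great", "Great"),
    ("relevance", "Average", "Great"),
    ("relevance", "Bad", "Bad"),
    ("relevance", "Great", "Great"),
]

agreement = agreement_by_criterion(records)
print(agreement)
print(criteria_ready_to_scale(agreement))
```

Raw agreement is easy to read but can look inflated when one label dominates, so a chance-corrected statistic may be worth adding once the golden dataset grows.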

Why Evals Matter for Coworking Operators

Coworking spaces are increasingly using AI to generate and prioritize leads, support members, analyze competitors, and streamline workflows. As these tools take on more responsibility, evals ensure they stay accurate and aligned with your business.

1. Reliable Member Support

Evals help confirm that AI assistants consistently give correct answers about pricing, memberships, policies, and availability.

2. Better Personalization

They test how well an AI understands your brand voice, usage patterns, and member preferences, leading to more tailored recommendations.

3. Trust and Transparency

As members interact more with AI, evals ensure responses remain clear, helpful, and on-brand.

In this new AI era, evaluations act as your quality-control system, ensuring your AI-powered workspace delivers a trusted, accurate, consistent, and reliable customer experience.

Inside Nexudus: Our Approach to AI

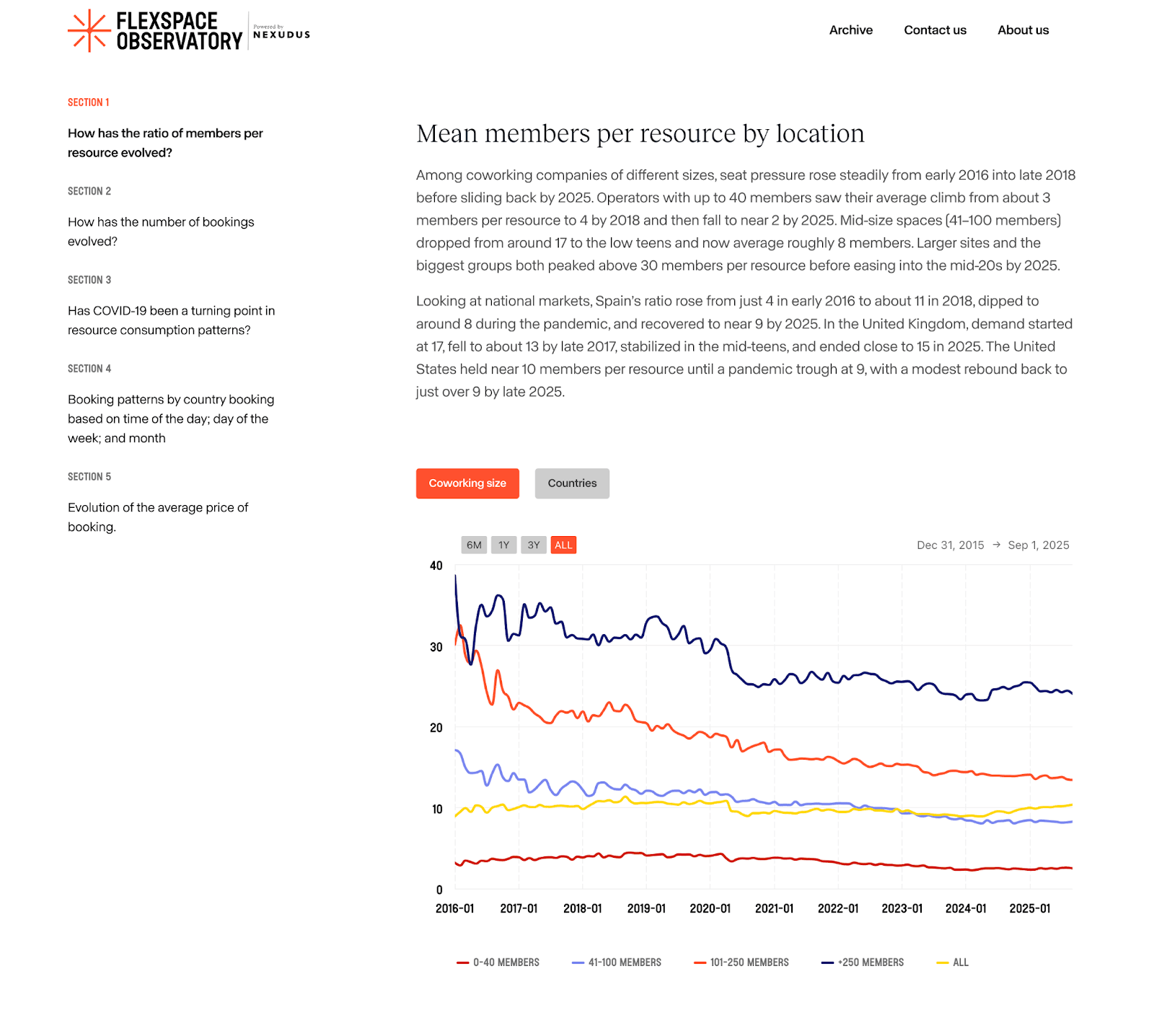

The FlexSpace Observatory is a Nexudus initiative designed to bring greater transparency to the coworking and flexible workspace industry through data. Drawing on more than a decade of experience, a global footprint spanning over 90 countries, and insights from 3,000+ coworking spaces, Nexudus offers one of the most comprehensive data resources in the sector.

Updated quarterly, each FlexSpace Observatory report offers deep analysis of global and regional trends, including:

Resource Demand Patterns: How desks, meeting rooms, and amenities are used across markets, with detailed insights into the UK, US, and Spain.

Member Tenure Analysis: Data on member retention, stay duration, and the factors that influence loyalty and revenue stability.

These insights act as industry benchmarks, helping operators compare their performance and better understand workspace usage trends.

The FlexSpace Observatory is valuable for anyone involved in coworking operations, strategy, sales, or community building. It helps businesses stay competitive, improve member experiences, uncover growth opportunities, and make informed decisions backed by data.

Built from anonymized data across thousands of spaces worldwide, the FlexSpace Observatory is an ongoing research project, not a one-time study, ensuring the industry always has access to fresh, reliable, and relevant information.

Voice Agents: AI to Watch

We enjoyed reading this story shared by Daniel Wesson and published in This Week in Coworking, as it highlights a very practical use case for AI in day-to-day coworking operations.

Here’s what stood out most to us in his story:

Anyone who runs a coworking space knows the moments that truly test an operation rarely show up during clean, convenient business hours. They arrive when everyone is off the clock and mentally checked out for the weekend.

This story was no different.

It was a Friday at 6:30 PM. After a long week, the team had just settled in at home when a text notification came through.

Their AI phone system had intercepted a call from a tenant working late. He’d noticed water pooling in the corridor, the kind of mess any coworking operator recognizes instantly.

Without that alert?

The team would have walked into a full-blown disaster on Monday morning.

And here’s the part every operator understands:

Before they introduced automation, that call would have gone straight to voicemail. And voicemail doesn’t help anyone. It doesn’t prioritize. It doesn’t escalate. It doesn’t take action. It simply records problems while they quietly turn into emergencies.

Then came the moment that makes every coworking manager nod in recognition:

The caller did what people always do: “Can I talk to someone?”

But when he realized the AI actually understood him, the system kicked in: it asked questions, gathered details, updated his CRM profile, and sent an urgent alert to the team.

Within 10 minutes, staff were back in the building addressing the issue.

That’s the reality of running a coworking space:

You can’t be everywhere. You can’t answer phones 24/7.

But emergencies, opportunities, and member needs don’t wait until business hours.

Member locked out

Tour requests at weird hours

Building issues

Deliveries

Prospects choosing speed over everything

Before automation, all of it goes to voicemail.

And voicemail is where opportunities tend to die.

The Opportunity

We’re entering a new era where AI can do more than “take a message.”

It can interpret, prioritize, route, follow up, book, notify, update CRMs, and act instantly, at all hours.

This means coworking operators can finally offer the responsiveness of a hotel front desk, without paying someone to stand there 24/7.

It means no more:

Missed tours

Lost leads

Bottlenecked sales cycles

Angry members

Weekend emergencies turning into Monday disasters

And it means spaces can run smoothly, even when teams are off the clock.

Coworking is a hospitality business.

Your members expect responsiveness.

Your prospects expect speed.

Your building needs monitoring even when you’re not there.

And sometimes the best investment isn’t in nicer furniture; it’s in the systems that protect your space, elevate your service, and unlock more hours in your day.

AI isn’t replacing operators. It’s making operators radically more effective.

It’s giving you more time to do what actually matters: building community.

The “AI Receptionist” for Coworking Operators

There are many tools on the market today, including Beside, Newo.ai, AssemblyAI, and ElevenLabs.io, each offering powerful voice and AI capabilities.

An AI receptionist built on tools like these:

Answers every call immediately, day or night

Handles member questions

Updates CRM records automatically

Flags emergencies in real time

Follows up with callers so nothing falls through the cracks

Books tours

Instead of voicemail capturing words, the AI captures intent and urgency, and acts on it.

Have you already tried any AI automation system?

Final Thoughts

Reliable AI doesn’t happen by accident; it requires ongoing evaluation, clear metrics, and the right tools to monitor performance over time. For coworking operators, this isn’t just technical detail; it’s what ensures accurate member support, personalized experiences, and smooth operations, even outside business hours.

As AI takes on a bigger role across the industry, structured evals and always-on automation will be essential to delivering consistent service, protecting workspaces, and giving teams more time to focus on what truly matters: their community.