Table of Contents

Welcome to a new edition of Coworkings AI.

This time, we’re covering:

SaaS tools are becoming interactive inside Claude. Is this about to change how we work and how software is used?

Why LLMs and Interfaces Don’t Create Moats. Context and Data Do.

How Nexudus is unifying Customer Context across the entire lifecycle

Let’s dive in.

Latest AI News & Trends

SaaS tools are becoming interactive inside Claude. Is this about to change how we work and how software is used?

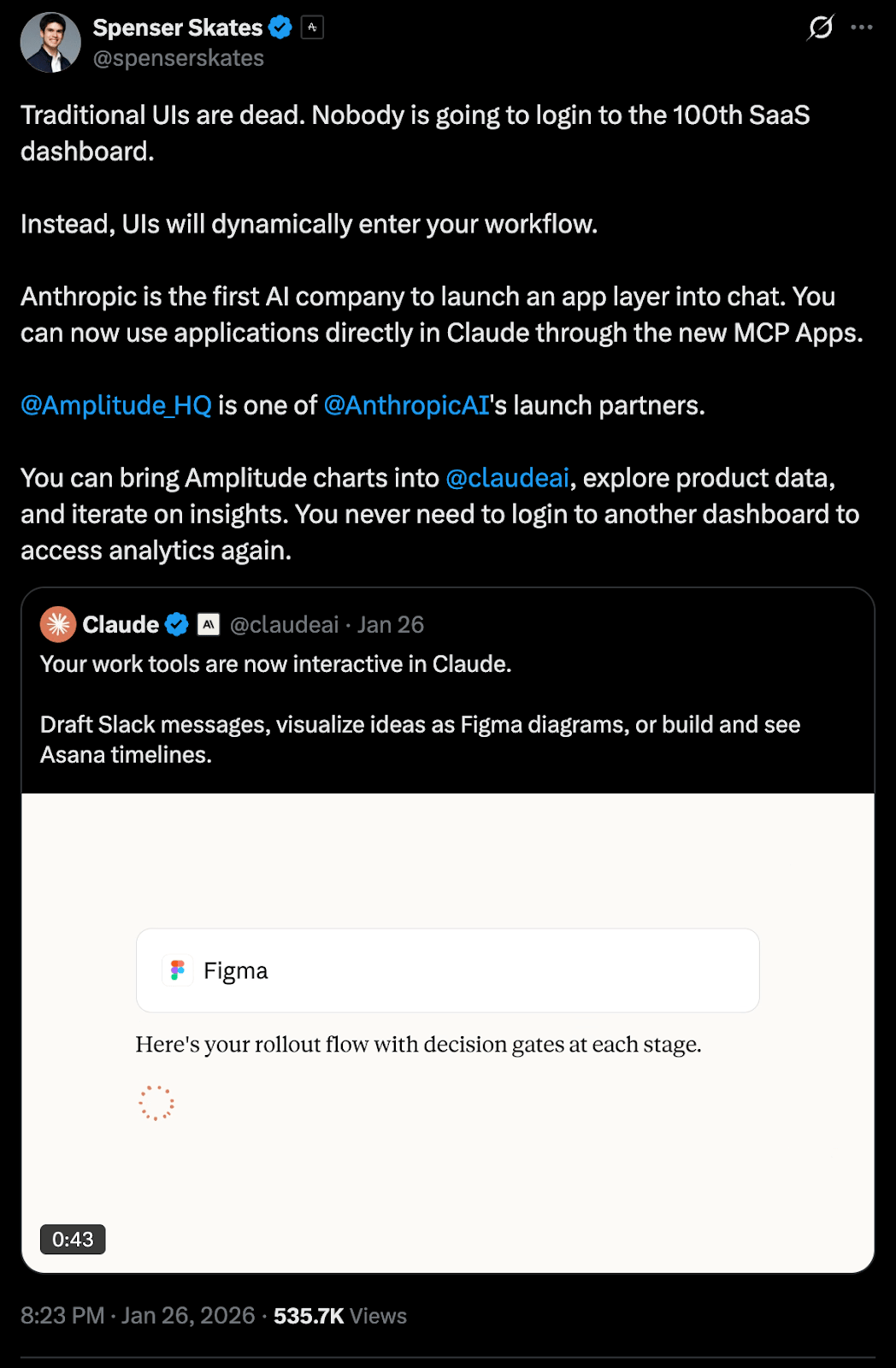

A few weeks ago, Spenser Skates, CEO and co-founder of Amplitude, shared this post on X:

“Traditional UIs are dead. Nobody is going to login to the 100th SaaS dashboard.”

His point was that, instead of users constantly switching between tools, tools are beginning to move directly into the user’s workflow.

With Claude and MCP, this is already happening. You can now run Amplitude analyses inside Claude, without logging into yet another dashboard.

What you can already do directly inside Claude

Several SaaS tools now operate as interactive layers within Claude, marking the beginning of a rapidly expanding ecosystem:

Amplitude

Build analytics charts, explore trends, tweak parameters, and uncover customer insights, all conversationally.

Asana

Turn chats into projects, tasks, and timelines your team can execute immediately.

Canva

Create presentation outlines, then customize branding and design in real time to produce client-ready decks.

Clay

Research companies, find contacts (emails, phone numbers), pull firmographic data, and draft personalized outreach, all in one flow.

monday.com

Manage projects, update boards, assign tasks intelligently, and visualize progress with insights.

Slack

Search conversations for context, generate message drafts, format them your way, and review before posting.

The technology enabling this shift

The underlying technology is built on the Model Context Protocol (MCP), the open standard for connecting tools to AI applications. MCP Apps is a new extension to MCP that lets any MCP server deliver an interactive interface within any supporting AI product, not just Claude.
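To make the protocol a little more concrete: MCP messages follow JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The sketch below only builds such a request in Python. The tool name `run_chart_query` and its arguments are hypothetical and are not taken from any real connector.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call(1, "run_chart_query", {"metric": "weekly_active_users"})
print(request)
```

In practice the AI application sends messages like this on the user's behalf, which is why the user never has to open the tool's own dashboard.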

The bigger question this raises for SaaS

This evolution forces us to rethink how SaaS products create, and capture, value.

If we can access most SaaS tools through AI super-apps like Claude, ChatGPT, or Gemini, the economics and product boundaries start to blur.

Take a simple example:

If I’m an Amplitude customer and I consume its full value directly inside Claude’s workflow:

What am I actually paying Amplitude for? Access to the data?

Do SaaS products become backend services for AI providers?

Are SaaS tools evolving into data infrastructure that LLMs rely on to generate better outcomes?

Does UI differentiation matter less than data quality, reliability, and depth of integration?

Will traditional UI products fade in favor of conversational, embedded experiences?

For SaaS companies, this shift creates a real strategic dilemma:

Do companies build an MCP connector and accept becoming a backend service for AI super-apps like ChatGPT or Claude, or do they hold onto their standalone product experience and risk competitors gaining an advantage through native AI integrations?

This doesn’t mean SaaS disappears.

It means its role may change, from software you use to intelligence that shows up exactly when you need it.

And that shift could redefine pricing, product strategy, and what “owning the user” really means in an AI world.

Bringing it closer to home

In the coworking software industry, how might core platforms be embedded into AI super-apps like Claude or ChatGPT?

Will these AI super-apps play a meaningful role in how coworking spaces are operated, optimized, and managed day to day?

We don’t have a definitive take on where this will ultimately land, and that’s okay. What matters right now is paying attention. The way SaaS tools are moving into AI workflows is still evolving, and the implications won’t be obvious overnight. But for operators, staying aware of these shifts is critical. Even if the answers aren’t clear yet, the direction is worth watching closely.

AI Signals

Why LLMs and Interfaces Don’t Create Moats. Context Does. Data Does.

Today, all companies, large coworking operators and boutique spaces alike, have access to the same Large Language Models. Claude, ChatGPT, and Gemini are no longer exclusive tools reserved for a few advanced teams; they’re broadly available to everyone.

Shubham Saboo, Senior AI Product Manager at Google, recently explored this idea on X.

LLMs are becoming commodities.

Prices keep dropping. Cost per token continues to decline.

And the performance gap between models keeps narrowing.

This shift changes the most important question.

It’s no longer: Which model are you using?

If every company has access to the same models:

Where does real differentiation come from?

How can brands use LLMs and AI to truly set themselves apart?

What is the actual moat?

The answer isn’t the model.

It’s data and context.

HubSpot CEO Yamini Rangan reinforced this idea in a post on LinkedIn:

“We all know you need good data for AI, but it’s not enough—you need shared context.

Data tells you what happened. Shared context tells you why it happened and what to do next.

For AI to be genuinely useful, it can’t just consume more data. It needs the same context that we have to make decisions.”

Dharmesh Shah, Founder and CTO of HubSpot, also built on this perspective on LinkedIn:

“In most companies, context lives in databases, docs, message threads… and people's heads. It's scattered and fragmented. That's a problem because without shared context, AI is just a very smart intern on their first day at work.

In a world where models will be interchangeable, shared context is what turns AI from a generic assistant into a system that actually understands your business.”

Models Will Be Commodities. Context Will Not.

In coworking & flex spaces, operators want to use AI for:

Member support

Ticket resolution

Sales qualification and tours

Community communication

Internal operations

Resources and space management

Let’s imagine a coworking operator wants to build a customer support agent and decides to create one using Claude or ChatGPT agents.

Prompt:

“Build a customer support agent for a coworking space.”

Any modern LLM will return something reasonable, but completely generic.

Now compare it with this:

“Build a support agent for a coworking operator with the following knowledge base:

A historical database of real member questions (Wi-Fi, meeting rooms, access, billing)

Brand tone guidelines

Clear edge cases that require human escalation (member conflicts, policy exceptions, legal issues)

A definition of what ‘successfully resolved’ means from the member’s and operator’s perspectives

Different rules, success criteria, and expectations for flex members, private offices, and enterprise clients”

Same model. Completely different results.

The difference wasn’t the prompt length or the task description. The value isn’t in telling the model what to do; it’s in helping it understand your specific business context.

The difference was shared context and data.

That context is the moat.
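As a rough illustration of the second prompt above, the sketch below gathers the same kinds of context into one reusable object and turns it into a system prompt. The `SupportContext` class, its field names, and the sample values are all assumptions made for this example, not a prescribed format.

```python
from dataclasses import dataclass, field


@dataclass
class SupportContext:
    """Reusable business context for a support agent (all fields are illustrative)."""
    brand_tone: str
    past_questions: list[str] = field(default_factory=list)
    escalation_cases: list[str] = field(default_factory=list)
    resolution_definition: str = ""
    rules_by_member_type: dict[str, str] = field(default_factory=dict)


def build_system_prompt(ctx: SupportContext) -> str:
    """Combine the context documents into a single system prompt string."""
    sections = [
        "You are a support agent for a coworking operator.",
        f"Brand tone: {ctx.brand_tone}",
        "Typical member questions:\n" + "\n".join(f"- {q}" for q in ctx.past_questions),
        "Escalate to a human when:\n" + "\n".join(f"- {c}" for c in ctx.escalation_cases),
        f"A ticket counts as resolved when: {ctx.resolution_definition}",
        "Rules by member type:\n"
        + "\n".join(f"- {k}: {v}" for k, v in ctx.rules_by_member_type.items()),
    ]
    return "\n\n".join(sections)


# Sample values are invented for the example.
ctx = SupportContext(
    brand_tone="friendly and concise",
    past_questions=["How do I book a meeting room?", "The Wi-Fi is down on floor 2."],
    escalation_cases=["member conflicts", "policy exceptions", "legal issues"],
    resolution_definition="the member confirms the issue is fixed",
    rules_by_member_type={"flex": "self-service first", "enterprise": "named contact"},
)
prompt = build_system_prompt(ctx)
print(prompt)
```

The task line stays one sentence long. Everything that changes the agent's behaviour lives in the context object, which can be reused across agents.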

Defining Context in the Coworking Industry

In the coworking industry, “context” is not a single document. It’s a structured set of foundational definitions that shape how your business operates and how AI agents should behave within it.

Before building AI agents (for example, in Claude Code), you should first define these foundational documents. Once they exist, they become reusable context.

This means you no longer write prompts from scratch; instead, you build agents on top of deeply defined business knowledge.

The result is agents that classify issues with high confidence, clearly explain their reasoning, and behave consistently with your brand and policies.

Context in a coworking business typically breaks down into the following categories:

1. Context on Your Customers

Your agents must understand who they are talking to. Different member types have different expectations, tolerance levels, and definitions of “good service.”

Provide clear context on:

Who your customers are

How they became customers

Which customers have high lifetime value (LTV)

Which customers are more likely to churn

For example:

A freelancer on a flexible plan

A 10-person startup in a private office

An enterprise client on a yearly contract

Each of these members expects a different level of service. Your agents need to recognize these distinctions to respond appropriately.

2. Context on Your Company

This defines how your business communicates and operates.

Include a Brand Voice and Response Style Guide, covering:

Tone (friendly, concise, premium, playful, etc.)

Do / Don’t rules (e.g., “never blame the member”)

Formatting rules (use of bullets, emojis, signatures)

Also include a Knowledge Base, such as:

FAQs

Policies

Pricing

Opening hours

Space rules

Shared resources and meeting rooms

Community norms and conflict handling

This ensures agents respond consistently and accurately.

3. Context on Historical Support Conversations

This reflects how your team actually behaves in the real world.

Include:

Historical support tickets

Real chat transcripts

How staff phrased answers

What was resolved vs. escalated

Label outcomes clearly:

Resolved

Escalated

Complaint

Churn risk

Collect examples of:

Good outputs

Bad outputs

Real edge cases

This grounds your agents in reality, not theory.

4. Context on What “Quality” Means

Define what a “good resolution” looks like for your members.

Create an Escalation and Safety Playbook, including:

Escalation triggers (legal issues, billing disputes, access or security incidents)

What the AI must do: acknowledge the issue, collect essential information, and route to a human

What the AI must never do: give legal advice, make accusations, or grant policy exceptions without approval

Add:

Severity levels (P0–P3) with routing instructions

Clear policy and decision rules

Rules written in “if / then” format

Exceptions and who can approve them

Logic by member type (flex, private office, enterprise, etc.)

Examples of allowed vs. not-allowed outcomes
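One way to picture rules written in “if / then” format with severity levels is a small lookup like the sketch below. The keywords, queue names, and the choice of P0 as the most urgent level are assumptions for illustration. A real playbook would define its own triggers and would likely need something more robust than keyword matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One 'if / then' rule: if any keyword appears, assign a severity and a route."""
    keywords: tuple[str, ...]
    severity: str  # assumed scale: "P0" (most urgent) to "P3" (least urgent)
    route_to: str  # hypothetical team or queue name


# Illustrative rules only; real triggers and routing come from the playbook.
RULES = [
    Rule(("break-in", "security", "locked out"), "P0", "on-site manager"),
    Rule(("lawyer", "legal", "lawsuit"), "P1", "operations lead"),
    Rule(("refund", "charged twice", "billing dispute"), "P2", "billing team"),
]


def triage(message: str) -> tuple[str, str]:
    """Return (severity, route) for the first matching rule, else P3 kept with the AI."""
    text = message.lower()
    for rule in RULES:
        if any(keyword in text for keyword in rule.keywords):
            return rule.severity, rule.route_to
    return "P3", "ai agent"


print(triage("I was charged twice for my day pass"))  # ('P2', 'billing team')
print(triage("What are your opening hours?"))         # ('P3', 'ai agent')
```

Keeping the rules as data rather than burying them in a prompt makes it easier to review them, and to see who approved each exception.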

5. Constraint Context

Constraints are context too.

Clearly define what the agent should not do. These constraints represent the real limitations that shape acceptable solutions and protect your business, your staff, and your members.

Well-designed constraints are just as important as capabilities.

Prompting will get easier. Models will require fewer words.

But context will always matter.

Coworking operators who treat context as a first priority will build better and more reliable systems.

That’s the real moat.

Inside Nexudus: A Data Platform for the Entire Customer Lifecycle

How Nexudus is unifying Customer Context across the entire lifecycle

In coworking and flex spaces, AI is only as good as the context it’s built on. That context doesn’t come from prompts; it comes from unified, reliable data across the entire customer lifecycle.

That’s where Nexudus comes in.

Most operators struggle with fragmented data: websites, CRMs, billing systems, access control, bookings, community tools, and marketing automation tools all store information in separate silos. This fragmentation makes it nearly impossible to answer basic questions about members, their journeys, or how spaces are actually used, let alone power meaningful AI agents.

The Nexudus Platform is designed to solve this foundational problem by acting as a unified data platform for coworking & flex operators.

At the core is a single source of truth that consolidates customer and space data across the entire lifecycle, from first touchpoint to daily usage and long-term retention. Nexudus integrates data from access control, payments, accounting, Wi-Fi, bookings, community activity, sensors, visitor management, and downstream tools like Slack, Zapier, and HubSpot, eliminating the need for brittle point-to-point integrations.

This unified data layer doesn’t just simplify operations; it creates shared context.

Nexudus structures data across multiple layers of granularity:

Member layer (individual behaviour and preferences)

Account or team layer (how companies use space)

Location layer (resource usage and space performance)

Multi-location layer (portfolio-wide trends and insights)

Across these layers, Nexudus captures three foundational types of data:

Behavioural data (bookings, purchases, access, customer support interactions, feedback)

User profile data (demographic and firmographic context)

Space data (occupancy, environmental conditions, utilisation)

Together, this creates a holistic, end-to-end view of the customer journey, connecting marketing touchpoints, physical space usage, transactions, and customer support interactions into a single, coherent picture.
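To show what multiple layers of granularity can mean in practice, the sketch below counts the same booking events at member, account, location, and portfolio level. The `BookingEvent` schema and the sample records are hypothetical. This is not the Nexudus data model or API.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class BookingEvent:
    """A behavioural record tagged with the layers it belongs to (hypothetical schema)."""
    member_id: str
    account_id: str
    location_id: str


def bookings_per_layer(events: list[BookingEvent]) -> dict[str, Counter]:
    """Count the same events at member, account, location, and portfolio level."""
    return {
        "member": Counter(e.member_id for e in events),
        "account": Counter(e.account_id for e in events),
        "location": Counter(e.location_id for e in events),
        "portfolio": Counter({"all_locations": len(events)}),
    }


# Invented sample data.
events = [
    BookingEvent("ana", "acme", "london"),
    BookingEvent("ana", "acme", "madrid"),
    BookingEvent("ben", "acme", "london"),
]
summary = bookings_per_layer(events)
print(summary["member"]["ana"])               # 2
print(summary["location"]["london"])          # 2
print(summary["portfolio"]["all_locations"])  # 3
```

Because every record carries all of its identifiers, one dataset can answer questions at any layer without a separate export per tool.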

This shared context is what transforms AI from a generic assistant into a system that can produce consistently more relevant outcomes.

By unifying customer context across the lifecycle, Nexudus provides the foundation for AI agents that understand who a member is, how they use the space, what success looks like for them, and when escalation or human involvement is required.

In a world where models (LLMs) are becoming commodities, Nexudus helps operators build the real moat: structured context, grounded in real operational data, that turns AI into real business value.

Final Thoughts

AI only delivers real business value when it’s powered by a large volume of data and clear context across the entire customer lifecycle.

Without that foundation, even the best AI agents can’t produce meaningful impact.